Question: 4.6. Explain informally why the delta training rule in Equation (4.10) is only an approx- imation to the true gradient descent rule of Equation (4.7,).

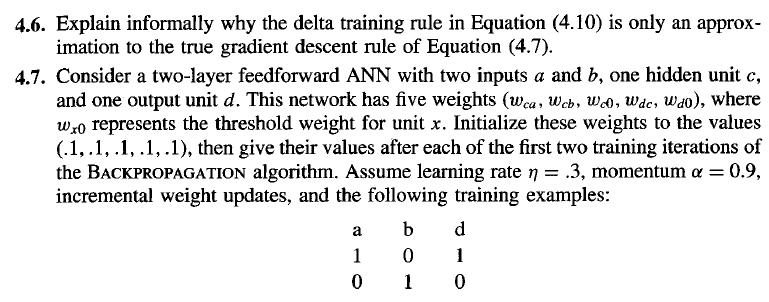

4.6. Explain informally why the delta training rule in Equation (4.10) is only an approx- imation to the true gradient descent rule of Equation (4.7,). 4.7. Consider a two-layer feedforward ANN with two inputs a and b, one hidden unit c, and one output unit d. This network has five weights (wca, Wcb, w.o, wde, Wao), where w,o represents the threshold weight for unit x. Initialize these weights to the values (.1,.1,.1,.1,.1), then give their values after each of the first two training iterations of the BACKPROPAGATION algorithm. Assume learning rate = .3, momentum = 0.9, incremental weight updates, and the following training examples: 4.6. Explain informally why the delta training rule in Equation (4.10) is only an approx- imation to the true gradient descent rule of Equation (4.7,). 4.7. Consider a two-layer feedforward ANN with two inputs a and b, one hidden unit c, and one output unit d. This network has five weights (wca, Wcb, w.o, wde, Wao), where w,o represents the threshold weight for unit x. Initialize these weights to the values (.1,.1,.1,.1,.1), then give their values after each of the first two training iterations of the BACKPROPAGATION algorithm. Assume learning rate = .3, momentum = 0.9, incremental weight updates, and the following training examples

Step by Step Solution

There are 3 Steps involved in it

Get step-by-step solutions from verified subject matter experts