Question


Define a ConvNet architecture suitable for classifying your synthetic data

Comments on the architecture:

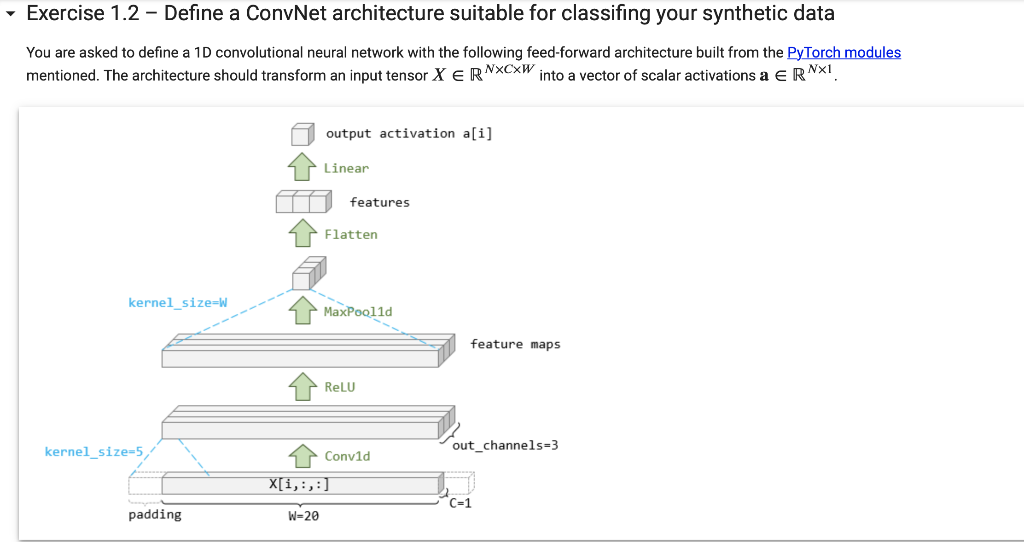

The convolutional layer is depicted as having exactly enough padding (zero padding, the Conv1d default) to ensure that the output feature maps have the same spatial length ($W = 20$) as the input vector. Notice that if you were to increase the filter size by 2, you would need to increase the padding by 1 (padding is added at both ends) to keep the output of the convolution the same length as before. See the documentation for the Conv1d module.
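The padding rule can be checked by running a dummy batch through `torch.nn.Conv1d` for a few odd filter sizes. This is a minimal sketch, assuming one input channel, $W = 20$ and 3 filters as in the figure, plus an arbitrary batch of 4 random inputs. With stride 1 and no dilation, the output length is $W + 2p - k + 1$ for padding $p$ and filter size $k$, so $p = \lfloor k/2 \rfloor$ keeps the length at $W$ whenever $k$ is odd.

```python
import torch

W = 20           # spatial length of each input vector (from the figure)
C = 1            # assumed: one input channel
num_filter = 3   # number of feature maps, as in the figure

x = torch.randn(4, C, W)  # dummy batch of 4 examples, shape (N, C, W)

out_lengths = {}
for filter_size in (3, 5, 7):
    # Each +2 in filter_size needs +1 padding (added at both ends)
    padding = filter_size // 2
    conv = torch.nn.Conv1d(C, num_filter, filter_size, padding=padding)
    out = conv(x)
    out_lengths[filter_size] = out.shape[-1]
    print(filter_size, padding, tuple(out.shape))

# Without padding, the output shrinks by filter_size - 1
no_pad_length = torch.nn.Conv1d(C, num_filter, 5)(x).shape[-1]
print("no padding, filter_size=5:", no_pad_length)
```

For an even filter size, `filter_size // 2` padding would make the output one element longer than $W$, which is one reason odd filter sizes are the usual choice for this kind of "same-length" convolution.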

The max pooling layer is used here to take the maximum value within each of the 3 feature maps shown. Since we want the max over the entire spatial extent of each feature map, we set kernel_size to the full length $W$.
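The sketch below illustrates what pooling over the full length does, assuming a batch of 4 feature maps of length $W = 20$ (random values standing in for the ReLU output). Because the default stride of `MaxPool1d` equals `kernel_size`, `kernel_size=W` gives exactly one pooling window per feature map, which matches taking the maximum over the spatial dimension directly.

```python
import torch

W = 20
num_filter = 3
h = torch.randn(4, num_filter, W)  # stand-in for the ReLU output, shape (N, num_filter, W)

pool = torch.nn.MaxPool1d(kernel_size=W)  # one window covering the whole feature map
pooled = pool(h)                          # shape (N, num_filter, 1)

# Same result as taking the max over the spatial dimension directly
direct = h.amax(dim=2, keepdim=True)

# By contrast, a small kernel only downsamples: length 20 -> 10 for kernel_size=2
halved = torch.nn.MaxPool1d(2)(h)

print(tuple(pooled.shape))          # (4, 3, 1)
print(torch.equal(pooled, direct))  # True
print(tuple(halved.shape))          # (4, 3, 10)
```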

The 1-dimensional convolution and pooling operations require spatial data in $(N, C, W)$ format (where $W$ is the spatial dimension), so that the operators know how long the spatial dimension is. However, the linear (fully-connected) layer doesn't know how to deal with spatial data, so the flatten operation simply reshapes the tensor from shape $(N, C, W)$ to shape $(N, D)$, where $D = CW$.
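The shape bookkeeping can be checked directly. This sketch uses arbitrary sizes: $N = 4$, 3 channels (the filter outputs), and a spatial length of 1, which is what remains after pooling over the full length $W$. `torch.nn.Flatten` uses `start_dim=1` by default, so the batch dimension $N$ is kept and the remaining dimensions are merged.

```python
import torch

N, C, W = 4, 3, 1            # after full-length max pooling, the spatial length is 1
pooled = torch.randn(N, C, W)

flat = torch.nn.Flatten()(pooled)  # start_dim=1 by default: keeps N, merges C and W
print(tuple(flat.shape))           # (4, 3), i.e. (N, D) with D = C * W

# Flatten is only a reshape: the values are unchanged
print(torch.equal(flat, pooled.reshape(N, C * W)))  # True

# The same rule on an unpooled map of length 20 gives D = 3 * 20 = 60
unpooled_flat = torch.nn.Flatten()(torch.randn(N, 3, 20))
print(tuple(unpooled_flat.shape))  # (4, 60)
```

This is why the linear layer in this architecture takes `num_filter` inputs: after full-length pooling, $D = \text{num\_filter} \times 1$.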

Even though we'll use this architecture for binary classification, we do not add an extra sigmoid operation directly to the output. This is done for the same reasons as for the multi-class PyTorch neural network from Lab 3. After the model is defined, we can still use it to predict binary class probabilities in $[0, 1]$ by feeding the features through the model and then passing the resulting activations $a$ through a sigmoid $\sigma$, so that the class predictions are $\hat{y} = \sigma(a)$.
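A minimal sketch of turning raw output activations into probabilities and hard predictions. A random tensor stands in for `model(X)`, the 0/1 targets are hypothetical, and the 0.5 decision threshold is an assumption. The loss comparison reflects a common PyTorch pairing (`BCEWithLogitsLoss` applied to raw activations), assumed here rather than taken from Lab 3: it computes the same value as a sigmoid followed by `BCELoss`, but in a more numerically stable way.

```python
import torch

torch.manual_seed(0)
N = 8
a = torch.randn(N, 1)                    # stand-in for raw activations model(X), shape (N, 1)
y = torch.randint(0, 2, (N, 1)).float()  # hypothetical 0/1 targets

y_hat = torch.sigmoid(a)                 # class-1 probabilities in [0, 1]
pred = (y_hat > 0.5).long()              # hard labels; a 0.5 threshold is assumed

# A loss that applies the sigmoid internally takes the raw activations directly
loss_logits = torch.nn.BCEWithLogitsLoss()(a, y)
# Applying the sigmoid first and then BCELoss gives the same value
loss_probs = torch.nn.BCELoss()(y_hat, y)

print(pred.view(-1).tolist())
print(torch.allclose(loss_logits, loss_probs))  # True
```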

Add a few lines of code to define a PyTorch model with the above architecture.

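```python
import torch

# Assumed sizes: in the notebook, C and W come from the synthetic data (N, C, W = X.shape)
C = 1   # number of input channels
W = 20  # spatial length of each input vector (from the figure)

torch.manual_seed(0)  # Ensure model weights initialized with same random numbers

num_filter = 3   # The number of filters to learn
filter_size = 5  # The size of each filter

model = torch.nn.Sequential(
    # filter_size//2 zeros on each end keeps the output length equal to W
    torch.nn.Conv1d(C, num_filter, filter_size, padding=filter_size // 2),
    torch.nn.ReLU(),
    # Pool over the whole feature map: (N, num_filter, W) -> (N, num_filter, 1)
    torch.nn.MaxPool1d(kernel_size=W),
    # Reshape (N, num_filter, 1) -> (N, num_filter)
    torch.nn.Flatten(),
    # One raw activation per example for binary classification (no sigmoid)
    torch.nn.Linear(in_features=num_filter, out_features=1),
)
```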

```python
# Check that the model matches the required architecture
assert len(model) == 5, "Should be 5 layers!"
assert isinstance(model[0], torch.nn.Conv1d), "layer 0 should be Conv1d"
assert model[0].in_channels == C, "layer 0 should expect C input channels"
assert model[0].out_channels == num_filter, "layer 0 should expect %d output channels" % num_filter
assert model[0].kernel_size[0] == filter_size, "layer 0 filter size should be %d" % filter_size
assert model[0].padding[0] == filter_size//2, "layer 0 padding should be %d" % (filter_size//2)
assert isinstance(model[1], torch.nn.ReLU), "layer 1 should be ReLU"
assert isinstance(model[2], torch.nn.MaxPool1d), "layer 2 should be MaxPool1d"
assert model[2].kernel_size == W, "layer 2 should pool over the entire input feature map"
assert isinstance(model[3], torch.nn.Flatten), "layer 3 should be Flatten"
assert isinstance(model[4], torch.nn.Linear), "layer 4 should be Linear"
assert model[4].in_features == num_filter, "layer 4 should accept %d inputs" % num_filter
assert model[4].out_features == 1, "layer 4 should have only 1 output"
print("Looks OK!")
```

Exercise 1.2 - Define a ConvNet architecture suitable for classifying your synthetic data. You are asked to define a 1D convolutional neural network with the following feed-forward architecture, built from the PyTorch modules mentioned. The architecture should transform an input tensor $X \in \mathbb{R}^{N \times C \times W}$ into a vector of scalar activations $a \in \mathbb{R}^{N \times 1}$.
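As a sanity check on the overall mapping from $X \in \mathbb{R}^{N \times C \times W}$ to $a \in \mathbb{R}^{N \times 1}$, the sketch below pushes a random batch through the architecture one layer at a time and records each intermediate shape. It assumes $C = 1$, $W = 20$, three filters of size 5, and an arbitrary batch size $N = 4$; the random input stands in for the synthetic data.

```python
import torch

torch.manual_seed(0)
N, C, W = 4, 1, 20              # assumed sizes: batch of 4, one channel, length 20
num_filter, filter_size = 3, 5

model = torch.nn.Sequential(
    torch.nn.Conv1d(C, num_filter, filter_size, padding=filter_size // 2),
    torch.nn.ReLU(),
    torch.nn.MaxPool1d(kernel_size=W),
    torch.nn.Flatten(),
    torch.nn.Linear(num_filter, 1),
)

X = torch.randn(N, C, W)        # stand-in for the synthetic data
shapes = [tuple(X.shape)]
h = X
for layer in model:             # nn.Sequential can be iterated layer by layer
    h = layer(h)
    shapes.append(tuple(h.shape))
    print(f"{layer.__class__.__name__:>9}: {tuple(h.shape)}")
```

The printed trace should read Conv1d (4, 3, 20), ReLU (4, 3, 20), MaxPool1d (4, 3, 1), Flatten (4, 3), Linear (4, 1): the spatial length stays at $W$ through the convolution, collapses to 1 in the pooling layer, and disappears in the flatten step.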
