Question


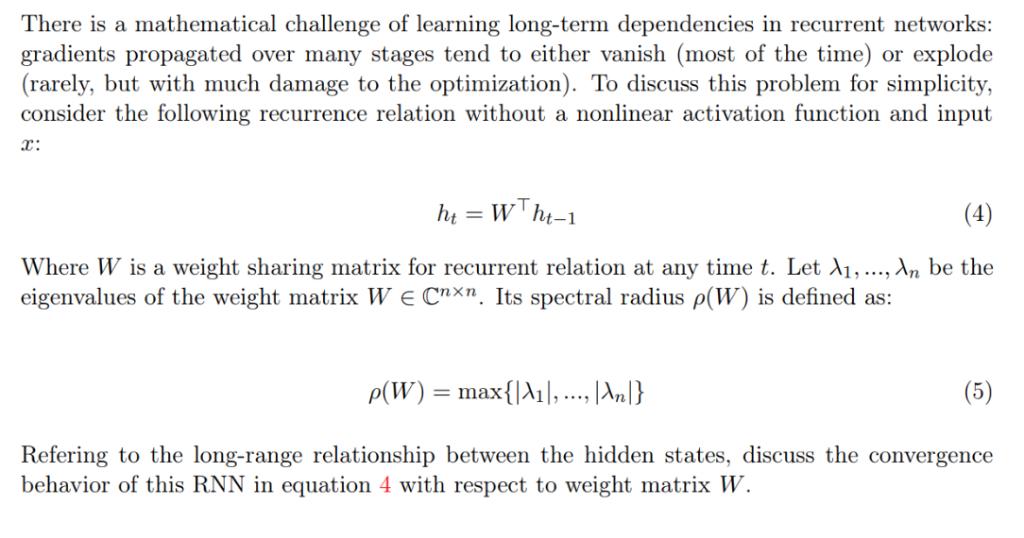

Learning long-term dependencies in recurrent networks poses a mathematical challenge: gradients propagated over many stages tend either to vanish (most of the time) or to explode (rarely, but with much damage to the optimization). To simplify the discussion, consider the following recurrence relation with no nonlinear activation function and no input $x$:

$$h_t = W h_{t-1} \tag{4}$$

where $W$ is the weight matrix shared by the recurrence across all time steps $t$. Let $\lambda_1, \ldots, \lambda_n$ be the eigenvalues of the weight matrix $W \in \mathbb{C}^{n \times n}$. Its spectral radius $\rho(W)$ is defined as

$$\rho(W) = \max\{|\lambda_1|, \ldots, |\lambda_n|\}.$$

Referring to the long-range relationship between the hidden states, discuss the convergence behavior of the RNN in equation (4) with respect to the weight matrix $W$.
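Unrolling equation (4) gives $h_t = W^t h_0$, so the Jacobian $\partial h_t / \partial h_0 = W^t$ is the same matrix power that scales gradients flowing back over $t$ steps. The NumPy sketch below is a minimal numerical illustration under stated assumptions, not a definitive answer: it builds hypothetical diagonalizable test matrices $W = Q\,\mathrm{diag}(\lambda)\,Q^{-1}$ with a chosen spectral radius, iterates the recurrence, and compares $\|W^t\|_2^{1/t}$ with $\rho(W)$ (by Gelfand's formula the two agree as $t \to \infty$). The helper names, the random eigenbasis, and the eigenvalue ratios are illustrative choices only.

```python
import numpy as np

def spectral_radius(W: np.ndarray) -> float:
    """rho(W) = max(|lambda_1|, ..., |lambda_n|)."""
    return float(np.max(np.abs(np.linalg.eigvals(W))))

def hidden_state_norms(W: np.ndarray, h0: np.ndarray, steps: int) -> np.ndarray:
    """Iterate h_t = W h_{t-1} (equation 4) and return ||h_t|| for t = 0..steps."""
    h = h0.copy()
    norms = [np.linalg.norm(h)]
    for _ in range(steps):
        h = W @ h
        norms.append(np.linalg.norm(h))
    return np.array(norms)

def jacobian_growth_rate(W: np.ndarray, t: int) -> float:
    """||dh_t/dh_0||_2^(1/t) = ||W^t||_2^(1/t); by Gelfand's formula this tends to rho(W)."""
    return float(np.linalg.norm(np.linalg.matrix_power(W, t), 2) ** (1.0 / t))

def matrix_with_radius(rho: float, Q: np.ndarray) -> np.ndarray:
    """Hypothetical 4x4 test matrix W = Q diag(lam) Q^{-1} whose largest |eigenvalue| is rho."""
    lam = rho * np.array([1.0, 0.8, 0.5, 0.3])  # illustrative eigenvalue ratios
    return Q @ np.diag(lam) @ np.linalg.inv(Q)

rng = np.random.default_rng(0)
Q = rng.standard_normal((4, 4))  # random eigenbasis, invertible with probability 1
h0 = rng.standard_normal(4)

for rho in (0.9, 1.0, 1.1):
    W = matrix_with_radius(rho, Q)
    norms = hidden_state_norms(W, h0, steps=100)
    print(f"rho(W)={spectral_radius(W):.3f}  "
          f"||h_100||/||h_0||={norms[-1] / norms[0]:.2e}  "
          f"||W^100||^(1/100)={jacobian_growth_rate(W, 100):.3f}")
```

For this diagonalizable setup one would expect $\|h_t\|$ (and $\|W^t\|$) to decay geometrically when $\rho(W) < 1$, grow geometrically when $\rho(W) > 1$, and stay bounded without vanishing when $\rho(W) = 1$. A non-diagonalizable $W$ with $\rho(W) = 1$ can still grow polynomially because of its Jordan blocks.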


