Question

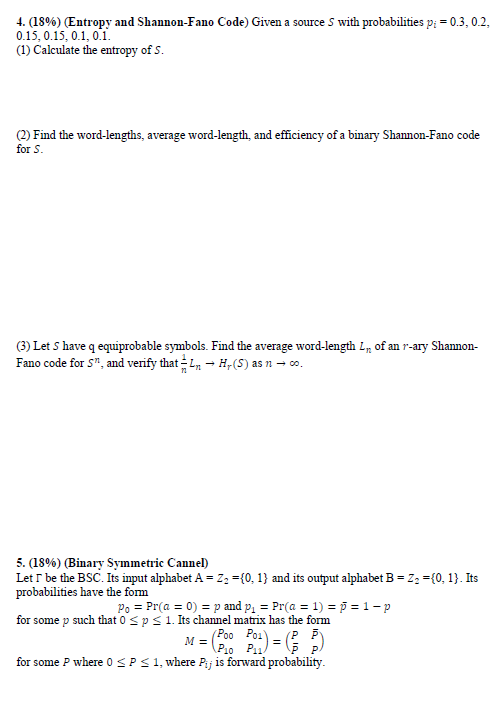

This is a Coding & Information Theory question

4. (18%) (Entropy and Shannon-Fano Code) Given a source $S$ with probabilities $p_i = 0.3, 0.2, 0.15, 0.15, 0.1, 0.1$:

(1) Calculate the entropy of $S$.

(2) Find the word-lengths, average word-length, and efficiency of a binary Shannon-Fano code for $S$.

(3) Let $S$ have $q$ equiprobable symbols. Find the average word-length $L_n$ of an $r$-ary Shannon-Fano code for $S^n$, and verify that $\frac{1}{n} L_n \to H_r(S)$ as $n \to \infty$.

5. (18%) (Binary Symmetric Channel) Let $\Gamma$ be the BSC. Its input alphabet is $A = \mathbb{Z}_2 = \{0, 1\}$ and its output alphabet is $B = \mathbb{Z}_2 = \{0, 1\}$. Its input probabilities have the form $p_0 = \Pr(a = 0) = p$ and $p_1 = \Pr(a = 1) = \bar{p} = 1 - p$ for some $p$ such that 0 …
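The quantities asked for in problem 4 can be checked numerically. The following is a minimal sketch, not a worked solution from the source. It assumes the Shannon-Fano construction that gives each symbol the word-length $l_i = \lceil \log_r (1/p_i) \rceil$ (some texts use the name for a recursive splitting procedure instead, which can give different lengths), and it takes efficiency to mean $H_r(S)/L$. The function names are hypothetical.

```python
from math import log

def entropy(probs, r=2):
    """r-ary entropy H_r(S) = sum of p_i * log_r(1/p_i)."""
    return sum(p * log(1 / p, r) for p in probs if p > 0)

def shannon_fano_lengths(probs, r=2):
    """Word-lengths l_i = ceil(log_r(1/p_i)) (assumed construction).

    Found as the smallest l with r**(-l) <= p_i, which avoids
    rounding a floating-point logarithm.
    """
    lengths = []
    for p in probs:
        l = 0
        while r ** (-l) > p:
            l += 1
        lengths.append(l)
    return lengths

def extension_length(q, n, r=2):
    """Word-length of every symbol of S^n when S has q equiprobable
    symbols: the smallest l with r**l >= q**n, i.e. ceil(n * log_r q).
    All words have this length, so it is also the average L_n."""
    l = 0
    while r ** l < q ** n:
        l += 1
    return l

# Part (1) and (2): the source from problem 4.
probs = [0.3, 0.2, 0.15, 0.15, 0.1, 0.1]
H = entropy(probs)                      # about 2.471 bits
lengths = shannon_fano_lengths(probs)   # [2, 3, 3, 3, 4, 4]
L = sum(p * l for p, l in zip(probs, lengths))  # 2.9
efficiency = H / L                      # about 0.852

# Part (3): illustrative values q = 3, r = 2 (chosen here, not in the
# question). L_n / n approaches log_2(3), which is about 1.585.
ratios = [extension_length(3, n) / n for n in (1, 2, 10, 100)]

print(H, lengths, L, efficiency, ratios)
```

For part (3), since $n \log_r q \le L_n < n \log_r q + 1$, dividing by $n$ shows that $L_n / n$ is squeezed toward $\log_r q = H_r(S)$, which is the behaviour the `ratios` list illustrates.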
