This is the question. I have also included page 354, which it refers to.

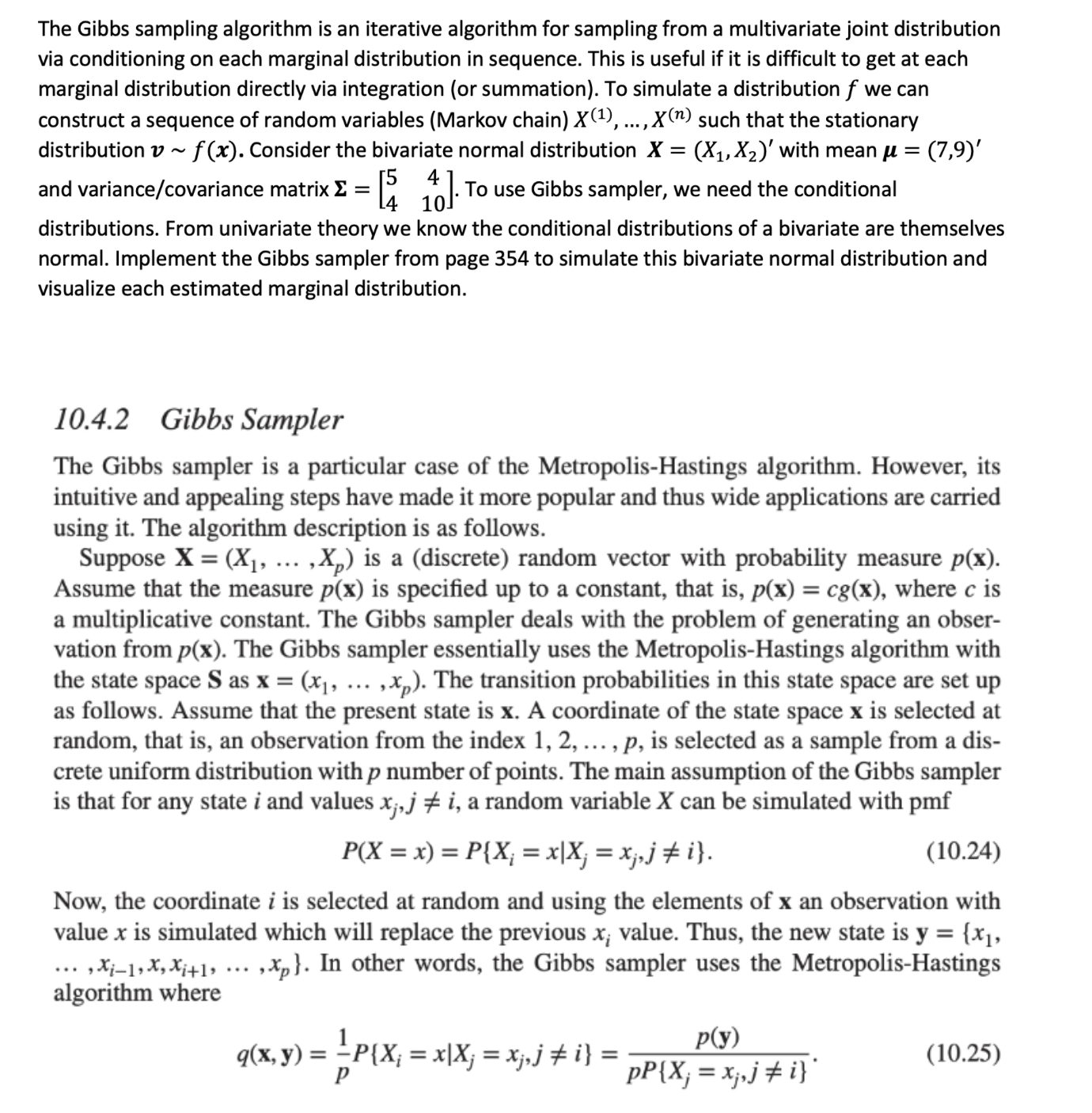

The Gibbs sampling algorithm is an iterative algorithm for sampling from a multivariate joint distribution via conditioning on each marginal distribution in sequence. This is useful if it is difficult to get at each marginal distribution directly via integration (or summation). To simulate a distribution $f$ we can construct a sequence of random variables (a Markov chain) $X^{(1)}, \ldots, X^{(n)}$ such that the stationary distribution $\pi \sim f(x)$.

Consider the bivariate normal distribution $X = (X_1, X_2)'$ with mean $\mu = (7, 9)'$ and variance/covariance matrix $\Sigma = \begin{bmatrix} 5 & -4 \\ -4 & 10 \end{bmatrix}$. To use the Gibbs sampler, we need the conditional distributions. From univariate theory we know the conditional distributions of a bivariate normal are themselves normal. Implement the Gibbs sampler from page 354 to simulate this bivariate normal distribution and visualize each estimated marginal distribution.

10.4.2 Gibbs Sampler

The Gibbs sampler is a particular case of the Metropolis-Hastings algorithm. However, its intuitive and appealing steps have made it more popular, and thus wide applications are carried out using it. The algorithm description is as follows.

Suppose $\mathbf{X} = (X_1, \ldots, X_p)$ is a (discrete) random vector with probability measure $p(\mathbf{x})$. Assume that the measure $p(\mathbf{x})$ is specified up to a constant, that is, $p(\mathbf{x}) = c\,g(\mathbf{x})$, where $c$ is a multiplicative constant. The Gibbs sampler deals with the problem of generating an observation from $p(\mathbf{x})$.

The Gibbs sampler essentially uses the Metropolis-Hastings algorithm with the state space $S$ as $\mathbf{x} = (x_1, \ldots, x_p)$. The transition probabilities in this state space are set up as follows. Assume that the present state is $\mathbf{x}$. A coordinate of the state $\mathbf{x}$ is selected at random, that is, an observation from the index set $1, 2, \ldots, p$ is selected as a sample from a discrete uniform distribution with $p$ points. The main assumption of the Gibbs sampler is that for any state $i$ and values $x_j$, $j \neq i$, a random variable $X$ can be simulated with pmf

$$P(X = x) = P\{X_i = x \mid X_j = x_j,\ j \neq i\}. \tag{10.24}$$

Now, the coordinate $i$ is selected at random, and using the elements of $\mathbf{x}$, an observation with value $x$ is simulated which will replace the previous $x_i$ value. Thus, the new state is $\mathbf{y} = (x_1, \ldots, x_{i-1}, x, x_{i+1}, \ldots, x_p)$.
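For the bivariate normal in the question, each full conditional is univariate normal: $X_i \mid X_j = x_j \sim N\big(\mu_i + \tfrac{\sigma_{ij}}{\sigma_{jj}}(x_j - \mu_j),\ \sigma_{ii} - \tfrac{\sigma_{ij}^2}{\sigma_{jj}}\big)$. The following is a minimal Python sketch of the random-coordinate update described on page 354, applied to that target. It is only an illustration under stated assumptions: the off-diagonal covariance is taken to be $-4$, which is an assumed reading of the scanned matrix, and the function names, starting state, burn-in length, and seed are hypothetical choices that do not come from the textbook.

```python
import random
import statistics

# Parameters from the question. The off-diagonal entry -4 is an ASSUMED
# reading of the scanned covariance matrix; adjust it if the original differs.
MU = (7.0, 9.0)
SIGMA = ((5.0, -4.0), (-4.0, 10.0))


def conditional_params(i, x_other, mu=MU, sigma=SIGMA):
    """Mean and variance of X_i given that the other coordinate equals x_other."""
    j = 1 - i
    slope = sigma[i][j] / sigma[j][j]
    mean = mu[i] + slope * (x_other - mu[j])
    var = sigma[i][i] - sigma[i][j] ** 2 / sigma[j][j]
    return mean, var


def gibbs_bivariate_normal(n_samples, burn_in=1000, seed=0):
    """Random-scan Gibbs sampler: one uniformly chosen coordinate is updated per step."""
    rng = random.Random(seed)
    x = [0.0, 0.0]  # arbitrary starting state (an illustrative choice)
    draws = []
    for step in range(burn_in + n_samples):
        i = rng.randrange(2)  # pick coordinate 0 or 1 uniformly at random
        mean, var = conditional_params(i, x[1 - i])
        x[i] = rng.gauss(mean, var ** 0.5)  # draw from the full conditional
        if step >= burn_in:
            draws.append((x[0], x[1]))
    return draws


def plot_marginals(draws):
    """Histogram of each coordinate; needs matplotlib, imported only when called."""
    import matplotlib.pyplot as plt

    fig, axes = plt.subplots(1, 2, figsize=(8, 3))
    for k, ax in enumerate(axes):
        ax.hist([d[k] for d in draws], bins=50, density=True)
        ax.set_title(f"Estimated marginal of X{k + 1}")
    plt.show()


if __name__ == "__main__":
    draws = gibbs_bivariate_normal(20000)
    x1 = [d[0] for d in draws]
    x2 = [d[1] for d in draws]
    print("sample means:", statistics.fmean(x1), statistics.fmean(x2))
    print("sample variances:", statistics.variance(x1), statistics.variance(x2))
```

Calling `plot_marginals(gibbs_bivariate_normal(20000))` should give histograms centered near 7 and 9 if the assumed parameters are right. Updating the coordinates in a fixed order on every sweep, rather than picking one at random, is a common alternative and would only change the line that chooses `i`.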