Question

Please use Python.

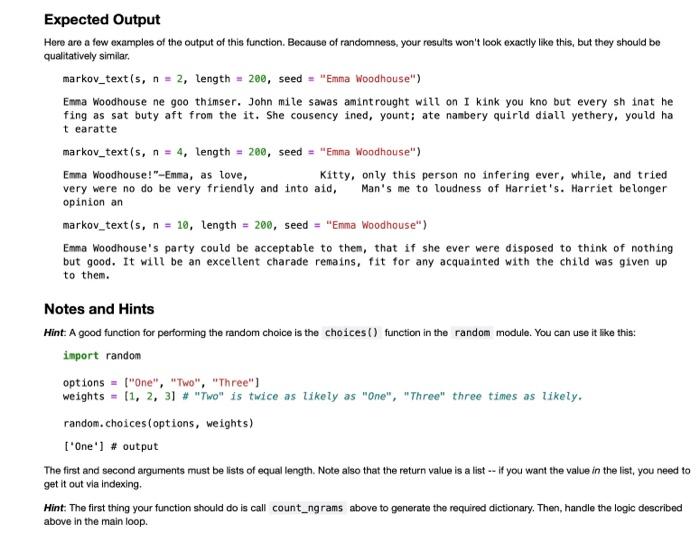

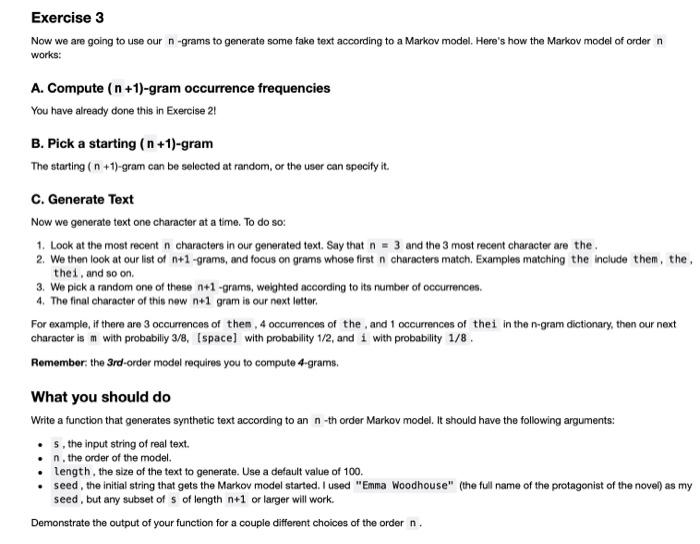

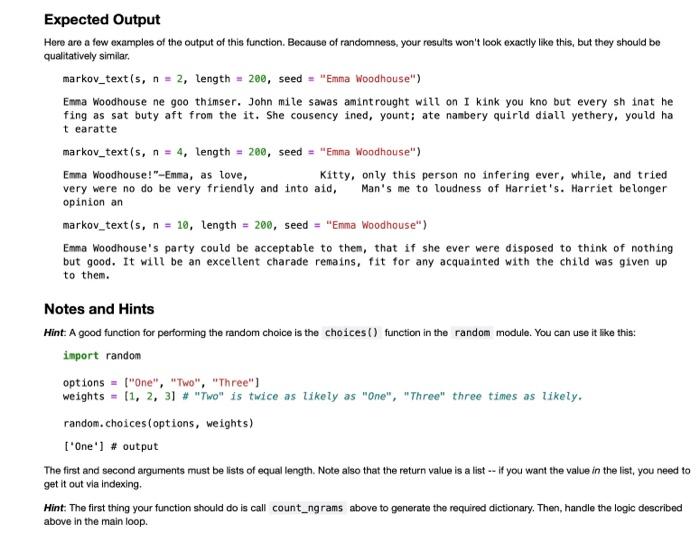

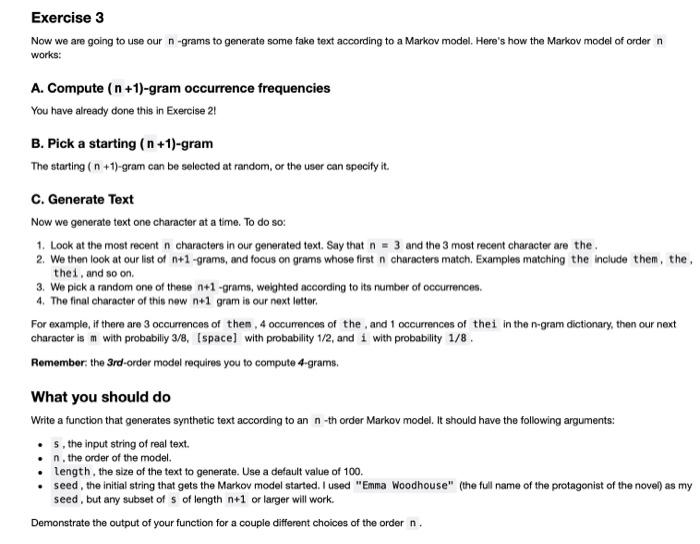

Exercise 3

Now we are going to use our n-grams to generate some fake text according to a Markov model. Here's how the Markov model of order n works:

A. Compute (n+1)-gram occurrence frequencies

You have already done this in Exercise 2!

B. Pick a starting (n+1)-gram

The starting (n+1)-gram can be selected at random, or the user can specify it.

C. Generate text

Now we generate text one character at a time. To do so:

1. Look at the most recent n characters in our generated text. Say that n = 3 and the 3 most recent characters are `the`.
2. We then look at our list of (n+1)-grams, and focus on grams whose first n characters match. Examples matching `the` include `them`, `the ` (with a trailing space), `thes`, and so on.
3. We pick a random one of these (n+1)-grams, weighted according to its number of occurrences.
4. The final character of this new (n+1)-gram is our next letter.

For example, if there are 3 occurrences of `them`, 4 occurrences of `the ` and 1 occurrence of `thei` in the n-gram dictionary, then our next character is `m` with probability 3/8, a space with probability 1/2, and `i` with probability 1/8.

Remember: the 3rd-order model requires you to compute 4-grams.

What you should do

Write a function that generates synthetic text according to an n-th order Markov model. It should have the following arguments:

- s, the input string of real text.
- n, the order of the model.
- length, the size of the text to generate. Use a default value of 100.
- seed, the initial string that gets the Markov model started. I used "Emma Woodhouse" (the full name of the protagonist of the novel) as my seed, but any subset of `s` of length n+1 or larger will work.

Demonstrate the output of your function for a couple of different choices of the order n.

Expected Output

Here are a few examples of the output of this function. Because of randomness, your results won't look exactly like this, but they should be qualitatively similar.

    markov_text(s, n = 2, length = 200, seed = "Emma Woodhouse")

Emma Woodhouse ne goo thimser. John mite sawas amint rought will on I kink you kno but every sh inat he fing as sat buty aft from the it. She cousency ined, yount; ate nambery quirld diall yethery, yould ha tearatte

    markov_text(s, n = 4, length = 200, seed = "Emma Woodhouse")

Emma Woodhouse!"-Emma, as love, Kitty, only this person no infering ever, white, and tried very were no do be very friendly and into aid, Man's me to loudness of Harriet's. Harriet belonger opinion an

    markov_text(s, n = 10, length = 200, seed = "Emma Woodhouse")

Emma Woodhouse's party could be acceptable to them, that if she ever were disposed to think of nothing but good. It will be an excellent charade remains, fit for any acquainted with the child was given up to them.

Notes and Hints

Hint. A good function for performing the random choice is the choices() function in the random module. You can use it like this:

    import random

    options = ["One", "Two", "Three"]
    weights = [1, 2, 3]  # "Two" is twice as likely as "One", "Three" three times as likely.

    random.choices(options, weights)
    ['One']  # output

The first and second arguments must be lists of equal length. Note also that the return value is a list. If you want the value in the list, you need to get it out via indexing.

Hint. The first thing your function should do is call count_ngrams above to generate the required dictionary. Then, handle the logic described above in the main loop.
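The procedure described in Exercise 3 could be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the course's reference solution. The Exercise 2 helper `count_ngrams` is not shown in the question, so the version here is a hypothetical stand-in that returns a dictionary mapping each n-gram to its count. The sketch also assumes that `length` means the number of characters generated after the seed, that n is at least 1, and that generation simply stops early if the current context never appears in `s`.

```python
import random
from collections import Counter


def count_ngrams(s, n=1):
    """Hypothetical stand-in for the Exercise 2 helper (not shown in the question).

    Returns a dict mapping each n-gram of s to its number of occurrences.
    """
    return dict(Counter(s[i:i + n] for i in range(len(s) - n + 1)))


def markov_text(s, n, length=100, seed="Emma Woodhouse"):
    """Generate text from an n-th order character-level Markov model of s.

    Assumes n >= 1 and treats `length` as the number of characters
    appended after the seed (an interpretation, not confirmed by the question).
    """
    # Step A: (n+1)-gram frequencies.
    counts = count_ngrams(s, n + 1)

    # Group the (n+1)-grams by their first n characters so each step is a
    # single dictionary lookup: context -> (next characters, their weights).
    transitions = {}
    for gram, count in counts.items():
        chars, weights = transitions.setdefault(gram[:n], ([], []))
        chars.append(gram[-1])
        weights.append(count)

    # Step B: the user-supplied seed starts the text.
    text = seed

    # Step C: generate one character at a time.
    for _ in range(length):
        context = text[-n:]  # the most recent n characters
        if context not in transitions:
            break  # this context never occurs in s, so stop early
        chars, weights = transitions[context]
        # random.choices returns a list, so index into it to get the character.
        text += random.choices(chars, weights)[0]

    return text
```

With `s` holding the full text of the novel, a call such as `markov_text(s, n=2, length=200, seed="Emma Woodhouse")` would produce output in the style of the examples in the question, though the exact text differs from run to run. Grouping the (n+1)-grams by context up front is a design choice made here for speed; filtering the dictionary inside the loop, as the step-by-step description suggests, gives the same distribution but rescans every gram for each generated character.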
