3.4.12 A Markov chain $X_0, X_1, X_2, \ldots$ has the transition probability matrix and is known to...

Question:

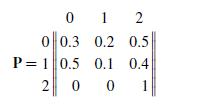

3.4.12 A Markov chain $X_0, X_1, X_2, \ldots$ has the transition probability matrix

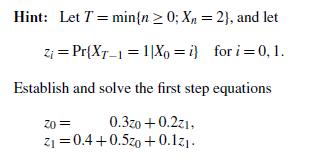

and is known to start in state $X_0 = 0$. Eventually, the process will end up in state 2. What is the probability that when the process moves into state 2, it does so from state 1?
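One possible way to set this up is first-step analysis. Let $z_i$ be the probability that the jump into state 2 is made from state 1, given $X_0 = i$. Assuming state 2 is absorbing and states 0 and 1 are transient, as the question implies, conditioning on the first step gives $z_0 = P_{00} z_0 + P_{01} z_1$ and $z_1 = P_{10} z_0 + P_{11} z_1 + P_{12}$, and the requested probability is $z_0$. The sketch below solves that 2x2 system. The function name `prob_enter_2_from_1` is made up for illustration, and the matrix `P_example` is a hypothetical placeholder, not the matrix from the figure, which has to be substituted in.

```python
from fractions import Fraction


def prob_enter_2_from_1(P):
    """Return z_0: the probability that a chain on states {0, 1, 2},
    started in state 0, makes its jump into state 2 from state 1.

    Assumes state 2 is absorbing and states 0 and 1 are transient.
    """
    # First-step equations, rearranged into a linear system in (z0, z1):
    #   (1 - P00) * z0 -       P01 * z1 = 0
    #        -P10 * z0 + (1 - P11) * z1 = P12
    a, b = 1 - P[0][0], -P[0][1]
    c, d = -P[1][0], 1 - P[1][1]
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular system: states 0 and 1 are not both transient")
    # Cramer's rule for z0 with right-hand side (0, P12).
    return (0 * d - b * P[1][2]) / det


# Hypothetical placeholder matrix (NOT the one from the problem's figure).
half, quarter = Fraction(1, 2), Fraction(1, 4)
P_example = [
    [half, quarter, quarter],
    [quarter, half, quarter],
    [Fraction(0), Fraction(0), Fraction(1)],
]

print(prob_enter_2_from_1(P_example))  # prints 1/3
```

Exact fractions are used here so the result can be compared directly with a hand calculation; plain floats work the same way if a decimal answer is preferred.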



Related Book For

An Introduction To Stochastic Modeling

ISBN: 9780233814162

4th Edition

Authors: Mark A. Pinsky, Samuel Karlin
