Answered step by step

Verified Expert Solution

Question

1 Approved Answer

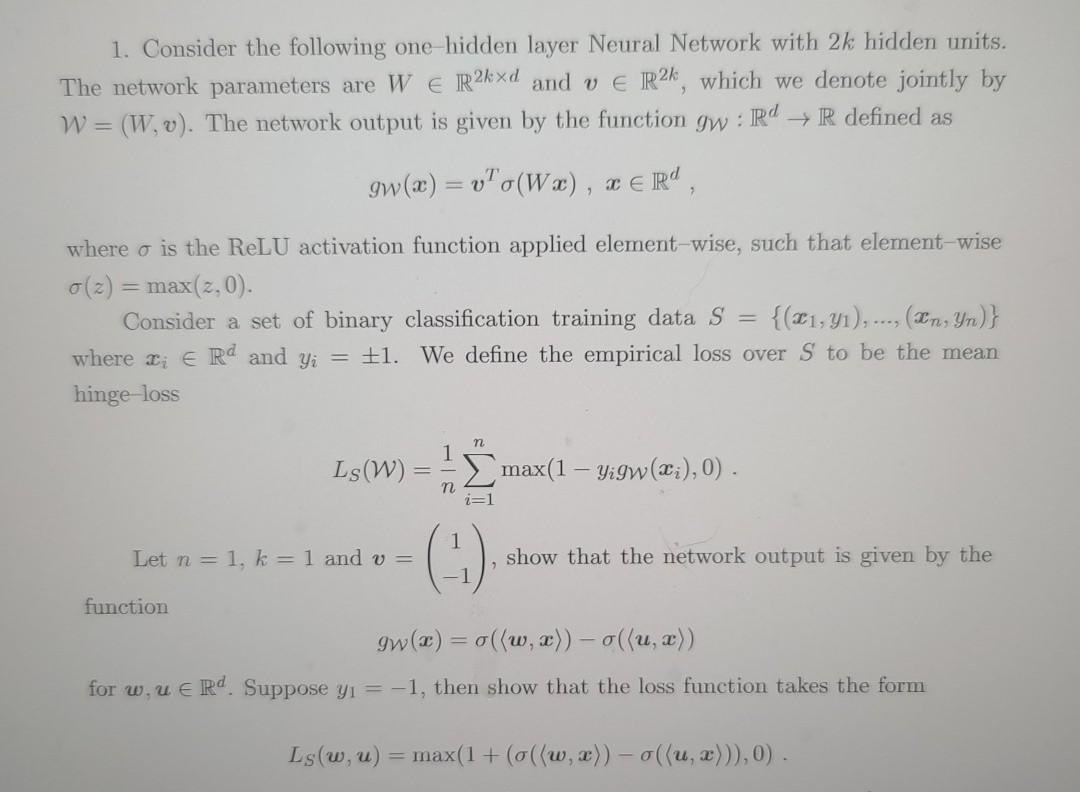

answer problem 2 1. Consider the following one-hidden layer Neural Network with 2k hidden units. The network parameters are W E R2kxd and ve R2k,

answer problem 2

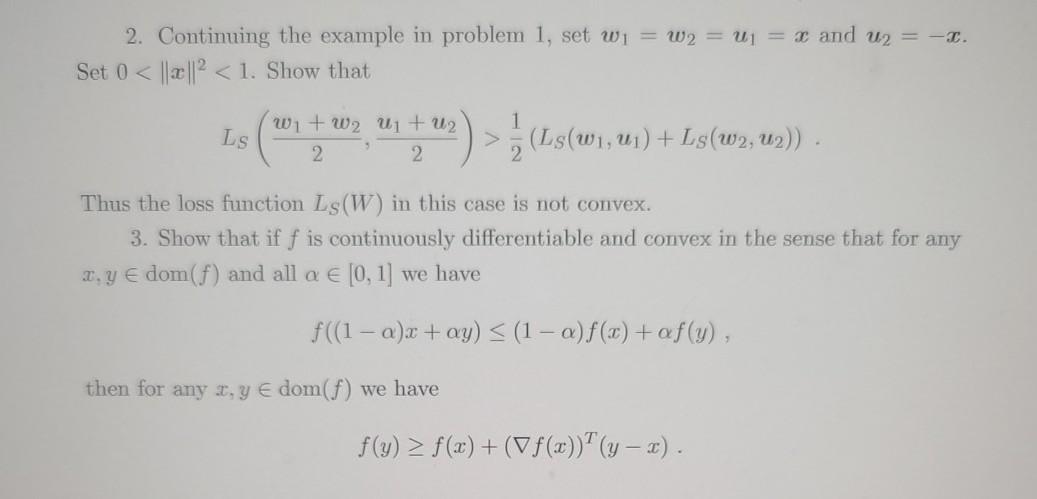

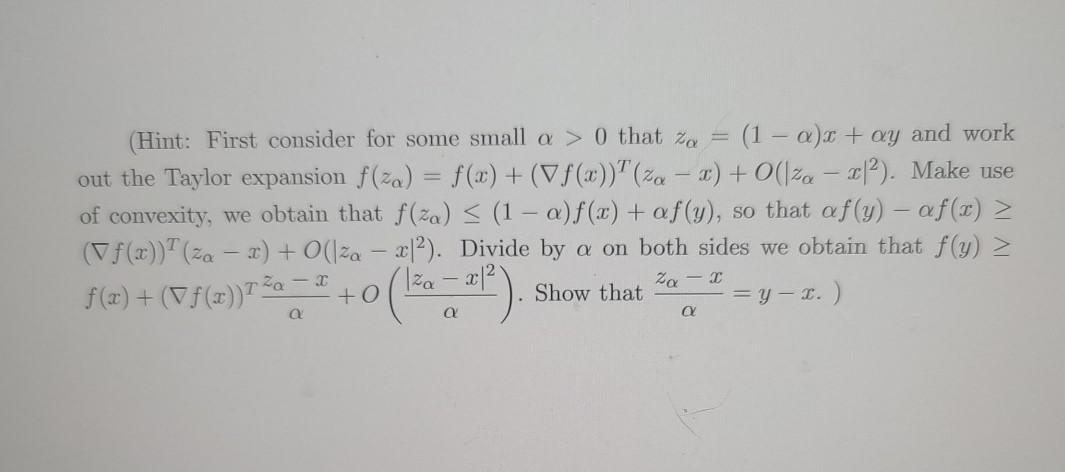

1. Consider the following one-hidden layer Neural Network with 2k hidden units. The network parameters are W E R2kxd and ve R2k, which we denote jointly by W = (W,v). The network output is given by the function gw : Rd R defined as gw(x) = v"o(Wa), x CER where o is the ReLU activation function applied element-wise, such that element-wise 0(z) = max(2.0). Consider a set of binary classification training data s {(21,41), ..., (In, Yn)} where di E Rd and yi = +1. We define the empirical loss over S to be the mean hinge loss n Ls (W) max(1 yigw({}),0) n Let n = 1. k = 1 and v= (-) show that the network output is given by the function gw(x) = o((w, x)) - ou,x)) for w.u e Rd. Suppose y = -1, then show that the loss function takes the form Is (w, u) = max(1 + (o({w,x)) - o((u, x))), 0). 2. Continuing the example in problem 1, set wi = w2 = U1 = x and u2 = -1. Set 0 ] (1500), us) + Listwa, uz) Thus the loss function Ls(W) in this case is not convex. 3. Show that if f is continuously differentiable and convex in the sense that for any y dom(f) and all a [0, 1] we have f((1 - ax + ay) f(x) + (V f(x))(y - x). (Hint: First consider for some small a > 0 that zo = (1 - ax + ay and work out the Taylor expansion f(za) = f(x) + (Vf(x))+ (%- ) + (170 2012). Make use of convexity, we obtain that f(za) = (1 - a)f(x) + af(y), so that af(y) - af(x) > (f(x))+ (za - 2) + O(lza x12). Divide by a on both sides we obtain that f(y) > f(x) + (f(x))72a +I + Iza - x12 Show that =y- 1.) za - (137*) a 1. Consider the following one-hidden layer Neural Network with 2k hidden units. The network parameters are W E R2kxd and ve R2k, which we denote jointly by W = (W,v). The network output is given by the function gw : Rd R defined as gw(x) = v"o(Wa), x CER where o is the ReLU activation function applied element-wise, such that element-wise 0(z) = max(2.0). Consider a set of binary classification training data s {(21,41), ..., (In, Yn)} where di E Rd and yi = +1. We define the empirical loss over S to be the mean hinge loss n Ls (W) max(1 yigw({}),0) n Let n = 1. k = 1 and v= (-) show that the network output is given by the function gw(x) = o((w, x)) - ou,x)) for w.u e Rd. Suppose y = -1, then show that the loss function takes the form Is (w, u) = max(1 + (o({w,x)) - o((u, x))), 0). 2. Continuing the example in problem 1, set wi = w2 = U1 = x and u2 = -1. Set 0 ] (1500), us) + Listwa, uz) Thus the loss function Ls(W) in this case is not convex. 3. Show that if f is continuously differentiable and convex in the sense that for any y dom(f) and all a [0, 1] we have f((1 - ax + ay) f(x) + (V f(x))(y - x). (Hint: First consider for some small a > 0 that zo = (1 - ax + ay and work out the Taylor expansion f(za) = f(x) + (Vf(x))+ (%- ) + (170 2012). Make use of convexity, we obtain that f(za) = (1 - a)f(x) + af(y), so that af(y) - af(x) > (f(x))+ (za - 2) + O(lza x12). Divide by a on both sides we obtain that f(y) > f(x) + (f(x))72a +I + Iza - x12 Show that =y- 1.) za - (137*) aStep by Step Solution

There are 3 Steps involved in it

Step: 1

Get Instant Access to Expert-Tailored Solutions

See step-by-step solutions with expert insights and AI powered tools for academic success

Step: 2

Step: 3

Ace Your Homework with AI

Get the answers you need in no time with our AI-driven, step-by-step assistance

Get Started