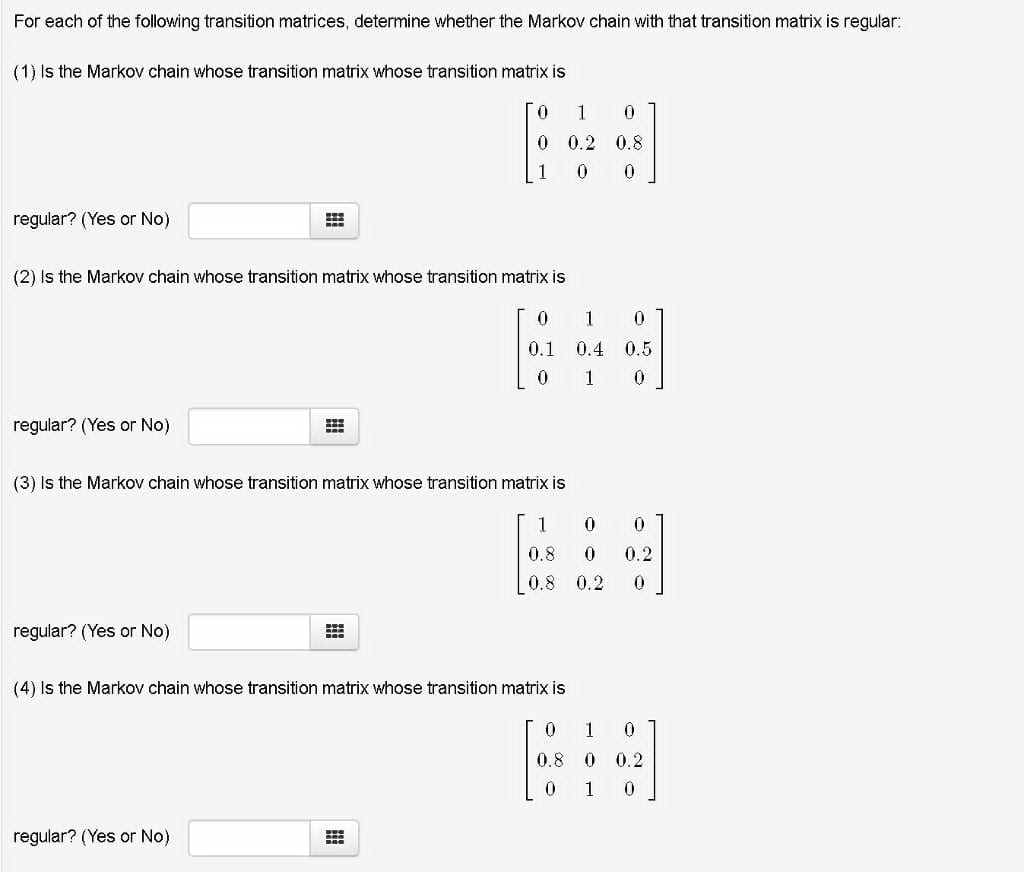

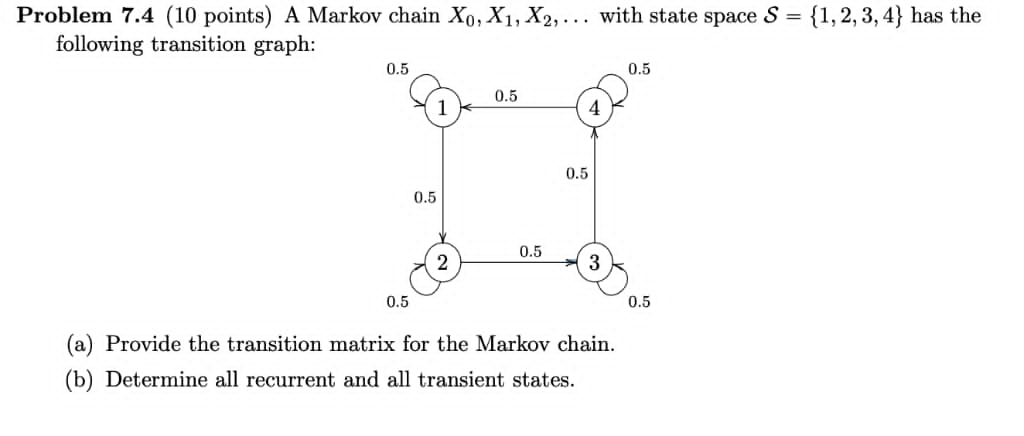

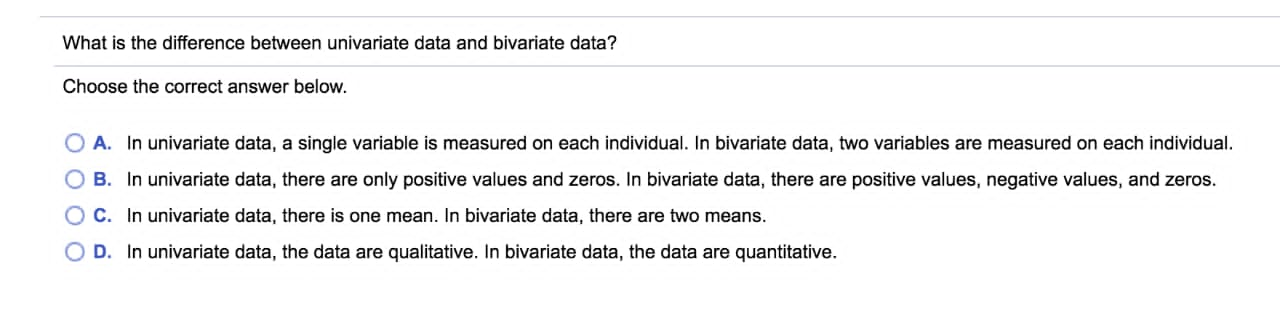

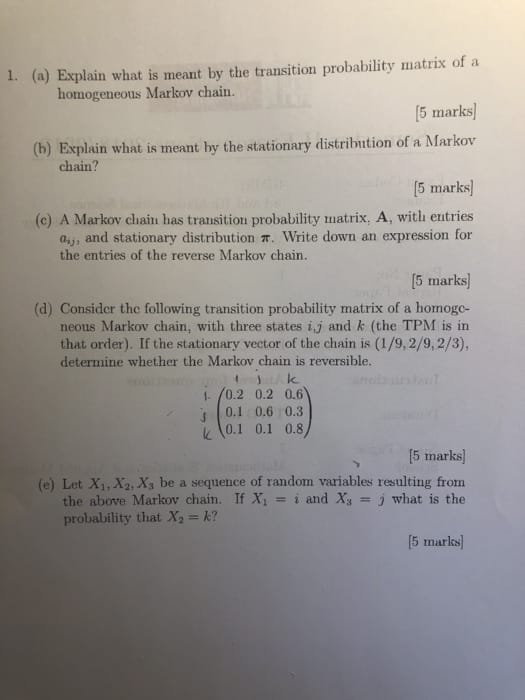

For each of the following transition matrices, determine whether the Markov chain with that transition matrix is regular.

(1) Is the Markov chain whose transition matrix is

$$\begin{pmatrix} 0 & 1 & 0 \\ 0 & 0.2 & 0.8 \\ 1 & 0 & 0 \end{pmatrix}$$

regular? (Yes or No)

(2) Is the Markov chain whose transition matrix is

$$\begin{pmatrix} 0 & 1 & 0 \\ 0.1 & 0.4 & 0.5 \\ 0 & 1 & 0 \end{pmatrix}$$

regular? (Yes or No)

(3) Is the Markov chain whose transition matrix is

$$\begin{pmatrix} 1 & 0 & 0 \\ 0.8 & 0 & 0.2 \\ 0.8 & 0.2 & 0 \end{pmatrix}$$

regular? (Yes or No)

(4) Is the Markov chain whose transition matrix is

$$\begin{pmatrix} 0 & 1 & 0 \\ 0.8 & 0 & 0.2 \\ 0 & 1 & 0 \end{pmatrix}$$

regular? (Yes or No)

Problem 7.4 (10 points) A Markov chain $X_0, X_1, X_2, \ldots$ with state space $S = \{1, 2, 3, 4\}$ has the following transition graph:

(a) Provide the transition matrix for the Markov chain.

(b) Determine all recurrent and all transient states.

What is the difference between univariate data and bivariate data? Choose the correct answer below.

- A. In univariate data, a single variable is measured on each individual. In bivariate data, two variables are measured on each individual.
- B. In univariate data, there are only positive values and zeros. In bivariate data, there are positive values, negative values, and zeros.
- C. In univariate data, there is one mean. In bivariate data, there are two means.
- D. In univariate data, the data are qualitative. In bivariate data, the data are quantitative.

1. (a) Explain what is meant by the transition probability matrix of a homogeneous Markov chain. [5 marks]

(b) Explain what is meant by the stationary distribution of a Markov chain. [5 marks]

(c) A Markov chain has transition probability matrix $A$, with entries $a_{ij}$, and stationary distribution $\pi$. Write down an expression for the entries of the reverse Markov chain. [5 marks]

(d) Consider the following transition probability matrix of a homogeneous Markov chain, with three states $i$, $j$ and $k$ (the TPM is in that order).
$$
\begin{array}{c|ccc}
  & i & j & k \\ \hline
i & 0.2 & 0.2 & 0.6 \\
j & 0.1 & 0.6 & 0.3 \\
k & 0.1 & 0.1 & 0.8
\end{array}
$$

If the stationary vector of the chain is $(1/9, 2/9, 2/3)$, determine whether the Markov chain is reversible. [5 marks]

(e) Let $X_1, X_2, X_3$ be a sequence of random variables resulting from the above Markov chain. If $X_1 = i$ and $X_3 = j$, what is the probability that $X_2 = k$? [5 marks]
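
The checks these exercises ask for can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a model answer: the helper names (`is_regular`, `is_stationary`, `is_reversible`, `bridge_probability`) are hypothetical, the matrices are typed in as read from the problem statements, and part (e) is assumed to condition on $X_1 = i$ and $X_3 = j$. Regularity is tested by looking at the power $(n-1)^2 + 1$ of the matrix (Wielandt's bound for primitive matrices), and reversibility by the detailed balance condition $\pi_a P_{ab} = \pi_b P_{ba}$.

```python
from fractions import Fraction as F


def matmul(A, B):
    """Multiply two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[r][m] * B[m][c] for m in range(n)) for c in range(n)]
            for r in range(n)]


def is_regular(P):
    """Return True if some power of P has only positive entries.

    By Wielandt's bound it is enough to inspect P**((n - 1)**2 + 1).
    """
    n = len(P)
    Q = P
    for _ in range((n - 1) ** 2):  # after the loop Q == P**((n-1)**2 + 1)
        Q = matmul(Q, P)
    return all(x > 0 for row in Q for x in row)


def is_stationary(P, pi):
    """Check pi P == pi exactly."""
    n = len(P)
    return all(sum(pi[r] * P[r][c] for r in range(n)) == pi[c]
               for c in range(n))


def is_reversible(P, pi):
    """Check detailed balance: pi[a] * P[a][b] == pi[b] * P[b][a]."""
    n = len(P)
    return all(pi[a] * P[a][b] == pi[b] * P[b][a]
               for a in range(n) for b in range(n))


def bridge_probability(P, start, mid, end):
    """P(X2 = mid | X1 = start, X3 = end); assumes the two-step
    probability from start to end is nonzero."""
    n = len(P)
    two_step = sum(P[start][m] * P[m][end] for m in range(n))
    return P[start][mid] * P[mid][end] / two_step


# Matrices (1) and (4) from the regularity exercise, as read from the text.
P1 = [[0, 1, 0], [0, F(1, 5), F(4, 5)], [1, 0, 0]]
P4 = [[0, 1, 0], [F(4, 5), 0, F(1, 5)], [0, 1, 0]]

# Question 1(d): states ordered i, j, k (indices 0, 1, 2).
P = [[F(2, 10), F(2, 10), F(6, 10)],
     [F(1, 10), F(6, 10), F(3, 10)],
     [F(1, 10), F(1, 10), F(8, 10)]]
pi = [F(1, 9), F(2, 9), F(2, 3)]

print(is_regular(P1), is_regular(P4))   # True False
print(is_stationary(P, pi))             # True
print(is_reversible(P, pi))             # True
print(bridge_probability(P, 0, 2, 1))   # 3/11
```

Exact `Fraction` arithmetic is used so that the equality tests in `is_stationary` and `is_reversible` are not affected by floating-point rounding; with floats, a tolerance-based comparison would be needed instead.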