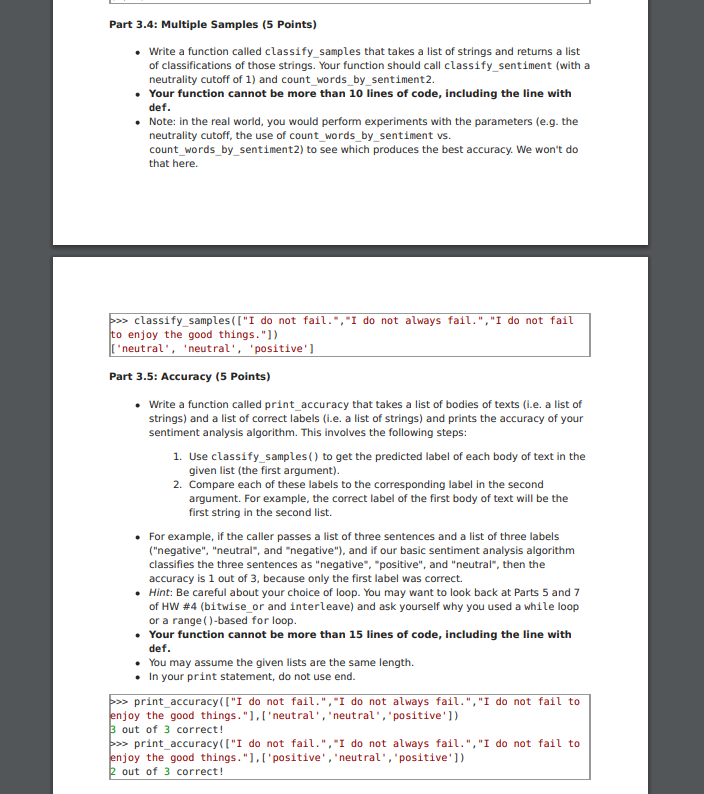

Part 3.4: Multiple Samples (5 Points). Write a function called classify_samples that takes a list of strings and returns a list of classifications of those strings. Your function should call classify_sentiment (with a neutrality cutoff of 1) and count_words_by_sentiment2. Your function cannot be more than 10 lines of code, including the line with def.

Note: in the real world, you would perform experiments with the parameters (e.g. the neutrality cutoff, the use of count_words_by_sentiment vs. count_words_by_sentiment2) to see which produces the best accuracy. We won't do that here.

    >>> classify_samples(["I do not fail.", "I do not always fail.", "I do not fail to enjoy the good things."])
    ['neutral', 'neutral', 'positive']

Part 3.5: Accuracy (5 Points). Write a function called print_accuracy that takes a list of bodies of text (i.e. a list of strings) and a list of correct labels (i.e. a list of strings) and prints the accuracy of your sentiment analysis algorithm. This involves the following steps:

1. Use classify_samples() to get the predicted label of each body of text in the given list (the first argument).
2. Compare each of these labels to the corresponding label in the second argument. For example, the correct label of the first body of text will be the first string in the second list.

For example, if the caller passes a list of three sentences and a list of three labels ("negative", "neutral", and "negative"), and if our basic sentiment analysis algorithm classifies the three sentences as "negative", "positive", and "neutral", then the accuracy is 1 out of 3, because only the first label was correct.

Hint: Be careful about your choice of loop. You may want to look back at Parts 5 and 7 of HW #4 (bitwise_or and interleave) and ask yourself why you used a while loop or a range()-based for loop.

Your function cannot be more than 15 lines of code, including the line with def. You may assume the given lists are the same length.
In your print statement, do not use end.

    >>> print_accuracy(["I do not fail.", "I do not always fail.", "I do not fail to enjoy the good things."], ['neutral', 'neutral', 'positive'])
    3 out of 3 correct!
    >>> print_accuracy(["I do not fail.", "I do not always fail.", "I do not fail to enjoy the good things."], ['positive', 'neutral', 'positive'])
    2 out of 3 correct
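
The label comparison described in Part 3.5 can be sketched on its own, separate from the classifier. This is only an illustration under stated assumptions: the helper name count_matching_labels is hypothetical (it is not part of the assignment), and the predicted labels are hard-coded from the worked example in the text instead of coming from classify_samples. It uses a range()-based for loop so that both lists are read at the same index.

```python
def count_matching_labels(predicted, correct):
    """Count the positions where predicted[i] equals correct[i].

    Hypothetical helper for illustration only; assumes both lists
    have the same length, as the assignment allows.
    """
    matches = 0
    # Index-based loop: the same i is used to read both lists.
    for i in range(len(predicted)):
        if predicted[i] == correct[i]:
            matches += 1
    return matches


# Worked example from the text: only the first label agrees.
predicted = ["negative", "positive", "neutral"]
correct = ["negative", "neutral", "negative"]
print(count_matching_labels(predicted, correct), "out of", len(correct))  # 1 out of 3
```

A plain for loop over one list would yield each predicted label but not the position needed to look up the matching correct label, which is why the sketch iterates over indices.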