
Question

1 Approved Answer

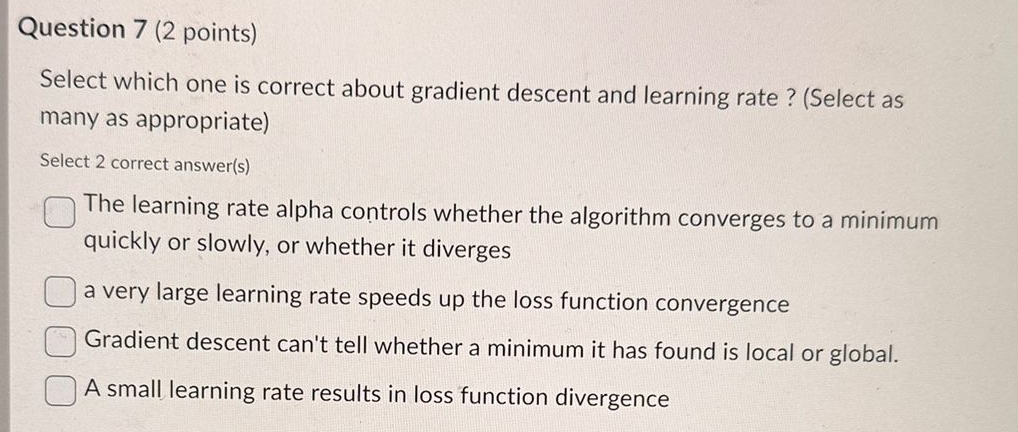

Question 7 (2 points): Which of the following statements about gradient descent and the learning rate are correct? (Select all that apply.)


The learning rate α (alpha) controls whether the algorithm converges to a minimum quickly or slowly, or whether it diverges.

A very large learning rate speeds up the convergence of the loss function.

Gradient descent can't tell whether a minimum it has found is local or global.

A small learning rate causes the loss function to diverge.
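To see how the learning rate and the starting point affect plain gradient descent, here is a minimal Python sketch. It assumes fixed-step updates x ← x − α·f′(x) on two one-dimensional toy functions chosen only for illustration: the convex f(x) = x², whose only minimum is x = 0, and the non-convex f(x) = x⁴ − 3x² + x, which has a local minimum near x ≈ 1.13 and a global minimum near x ≈ −1.30. The function names are hypothetical, not from any library.

```python
def gradient_descent(grad, x0, alpha, steps):
    """Plain fixed-step gradient descent: repeat x <- x - alpha * grad(x)."""
    x = x0
    for _ in range(steps):
        x = x - alpha * grad(x)
    return x


# Convex toy function f(x) = x**2 with gradient 2x; unique minimum at x = 0.
def grad_quadratic(x):
    return 2.0 * x


# Non-convex toy function with one local and one global minimum.
def f_nonconvex(x):
    return x**4 - 3 * x**2 + x


def grad_nonconvex(x):
    return 4 * x**3 - 6 * x + 1


if __name__ == "__main__":
    # Effect of the learning rate: each step multiplies x by (1 - 2*alpha).
    for alpha in (0.01, 0.1, 0.5, 1.1):
        x_final = gradient_descent(grad_quadratic, x0=5.0, alpha=alpha, steps=50)
        print(f"alpha={alpha}: x after 50 steps = {x_final:.4g}")

    # Effect of the starting point: the update only uses the local gradient,
    # so it settles in whichever basin it starts in.
    for x0 in (2.0, -2.0):
        x_min = gradient_descent(grad_nonconvex, x0=x0, alpha=0.01, steps=1000)
        print(f"start={x0}: x = {x_min:.4f}, f(x) = {f_nonconvex(x_min):.4f}")
```

On the quadratic, α = 0.01 is still far from 0 after 50 steps (slow convergence), α = 0.1 gets close, and α = 1.1 makes |1 − 2α| > 1 so the iterates grow without bound (divergence). On the non-convex function, both runs stop where the gradient is essentially zero, but only the run started at −2.0 reaches the lower (global) minimum. Nothing in the update rule itself indicates which kind of minimum was found.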
