Use reinforcement learning to solve this problem.

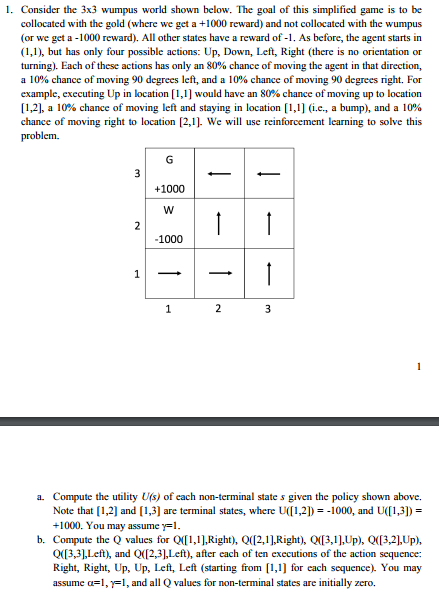

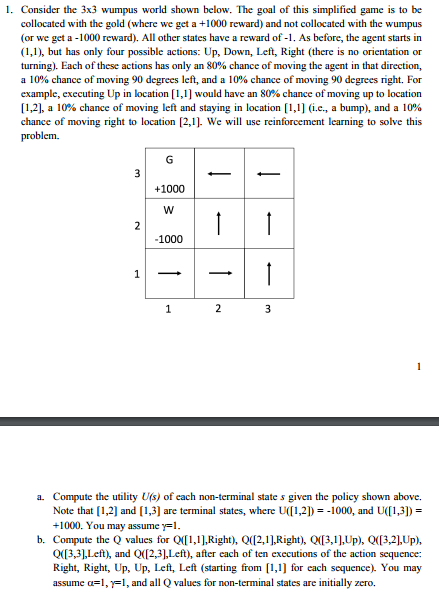

1. Consider the 3x3 wumpus world shown below. The goal of this simplified game is to be collocated with the gold (where we get a +1000 reward) and not collocated with the wumpus (or we get a -1000 reward). All other states have a reward of. As before, the agent starts in (1,1), but has only four possible actions: Up, Down, Left, Right (there is no orientation or turning). Each of these actions has only an 80% chance of moving the agent in that direction, a 10% chance of moving 90 degrees left, and a 10% chance of moving 90 degrees right. For example, executing Up in location [1,1] would have an 80% chance of moving up to location [1,2], a 10% chance of moving left and staying in location [1,1] (i.e., a bump), and a 10% chance of moving right to location [2,1 We will use reinforcement learning to solve this problem. +1000 1000 a. Compute the utility U(s) of each non-terminal state s given the policy shown above. Note that [1,2] and [13] are terminal states, where U([1,2],--1000, and U([13],- +1000. You may assume -1. b. Compute the Q values for Q([ 1,1 ],Right), Q([2,1 ],Right), Q([3,1],Up), Q([3,2],Up), Q(3,3,Left, and Q(2,3,Left), after each of ten executions of the action sequence Right, Right, Up, Up, Left, Left (starting from1, for each sequence). You may assume =1, 1, and all Q values for non-terminal states are initially zero. 1. Consider the 3x3 wumpus world shown below. The goal of this simplified game is to be collocated with the gold (where we get a +1000 reward) and not collocated with the wumpus (or we get a -1000 reward). All other states have a reward of. As before, the agent starts in (1,1), but has only four possible actions: Up, Down, Left, Right (there is no orientation or turning). Each of these actions has only an 80% chance of moving the agent in that direction, a 10% chance of moving 90 degrees left, and a 10% chance of moving 90 degrees right. For example, executing Up in location [1,1] would have an 80% chance of moving up to location [1,2], a 10% chance of moving left and staying in location [1,1] (i.e., a bump), and a 10% chance of moving right to location [2,1 We will use reinforcement learning to solve this problem. +1000 1000 a. Compute the utility U(s) of each non-terminal state s given the policy shown above. Note that [1,2] and [13] are terminal states, where U([1,2],--1000, and U([13],- +1000. You may assume -1. b. Compute the Q values for Q([ 1,1 ],Right), Q([2,1 ],Right), Q([3,1],Up), Q([3,2],Up), Q(3,3,Left, and Q(2,3,Left), after each of ten executions of the action sequence Right, Right, Up, Up, Left, Left (starting from1, for each sequence). You may assume =1, 1, and all Q values for non-terminal states are initially zero