Question

1 Approved Answer

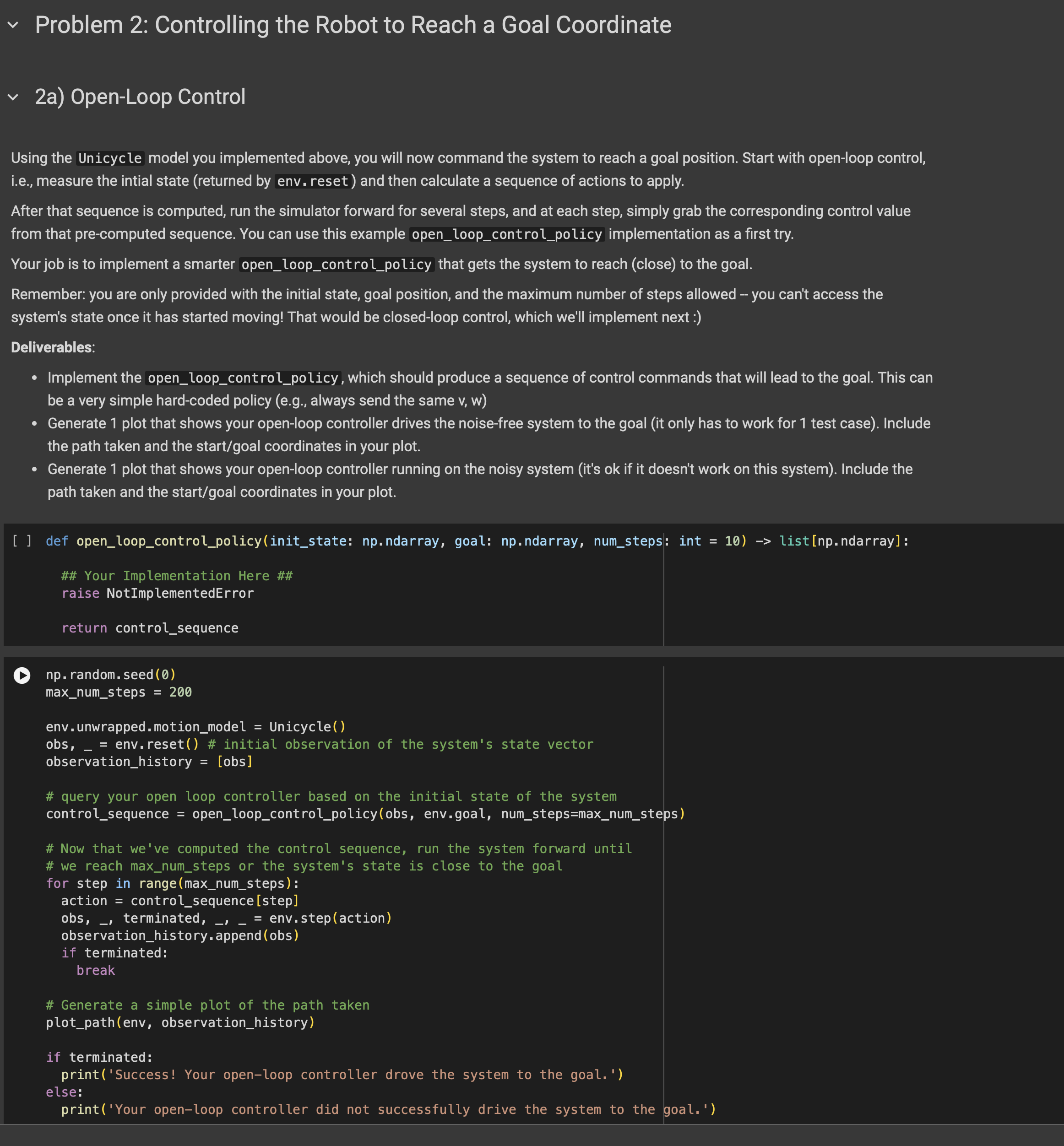

Using the Unicycle model you implemented above, you will now command the system to reach a goal position. Start with open - loop control, i

Using the Unicycle model you implemented above, you will now command the system to reach a goal position. Start with open-loop control, i.e., measure the initial state returned by env.reset() and then calculate a sequence of actions to apply. After that sequence is computed, run the simulator forward for several steps, and at each step, simply grab the corresponding control value from that precomputed sequence. You can use this example open_loop_control_policy implementation as a first try.

Your job is to implement a smarter open_loop_control_policy that gets the system to reach close to the goal.

Remember: you are only provided with the initial state, the goal position, and the maximum number of steps allowed. You can't access the system's state once it has started moving! That would be closed-loop control, which we'll implement next.
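To make the open-loop idea concrete, here is a minimal sketch of replaying a precomputed control sequence. It does not use the course environment: the `unicycle_step` helper, the state layout `[x, y, theta]`, the action layout `[v, omega]`, and the time step `dt = 0.1` are all assumptions made for illustration, and the real simulator may differ.

```python
import numpy as np


def unicycle_step(state, action, dt=0.1):
    """One Euler step of a unicycle (assumed layout: state=[x, y, theta], action=[v, omega])."""
    x, y, theta = state
    v, omega = action
    return np.array([
        x + v * np.cos(theta) * dt,
        y + v * np.sin(theta) * dt,
        theta + omega * dt,
    ])


def rollout_open_loop(init_state, control_sequence, dt=0.1):
    """Replay a precomputed sequence; the visited states never influence the controls."""
    states = [np.asarray(init_state, dtype=float)]
    for action in control_sequence:
        states.append(unicycle_step(states[-1], action, dt))
    return np.array(states)


# A hardcoded policy: drive straight at 1.0 units/s for 10 steps.
controls = [np.array([1.0, 0.0])] * 10
path = rollout_open_loop(np.array([0.0, 0.0, 0.0]), controls)
```

Because the loop only indexes into `controls`, any disturbance during the rollout goes uncorrected, which is the limitation that closed-loop control addresses.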

Deliverables:

Implement the open_loop_control_policy, which should produce a sequence of control commands that will lead to the goal. This can be a very simple hardcoded policy (e.g., always send the same …).

Generate a plot that shows your open-loop controller drives the noise-free system to the goal (it only has to work for test case …). Include the path taken and the start/goal coordinates in your plot.

Generate a plot that shows your open-loop controller running on the noisy system (it's OK if it doesn't work on this system). Include the path taken and the start/goal coordinates in your plot.

    def open_loop_control_policy(init_state: np.ndarray, goal: np.ndarray, num_steps: int) -> list[np.ndarray]:
        ## Your Implementation Here ##
        raise NotImplementedError
        return control_sequence

    np.random.seed(…)
    max_num_steps = …
    env.unwrapped.motion_model = Unicycle()
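One possible "smarter" policy is turn-then-drive: rotate in place until the robot faces the goal, drive straight for the remaining distance, then send zero commands. The sketch below is only an illustration under stated assumptions, not the course's reference solution. The state layout `[x, y, theta]`, the action layout `[v, omega]`, and the extra parameters `dt`, `v_max`, and `w_max` are hypothetical and not part of the original signature; check them against your own Unicycle model and the environment's action limits.

```python
import numpy as np


def open_loop_control_policy(init_state, goal, num_steps, dt=0.1, v_max=1.0, w_max=1.0):
    """Turn-then-drive sketch. dt, v_max, w_max are assumed values, not from the assignment."""
    x, y, theta = init_state
    dx, dy = goal[0] - x, goal[1] - y
    distance = np.hypot(dx, dy)
    # Heading error wrapped to [-pi, pi) so the robot takes the shorter turn.
    heading_error = (np.arctan2(dy, dx) - theta + np.pi) % (2 * np.pi) - np.pi

    # Number of steps for each phase, respecting the assumed speed limits.
    n_turn = int(np.ceil(abs(heading_error) / (w_max * dt)))
    n_drive = int(np.ceil(distance / (v_max * dt)))
    if n_turn + n_drive > num_steps:
        raise ValueError("goal not reachable within num_steps under the assumed limits")

    control_sequence = []
    if n_turn > 0:
        omega = heading_error / (n_turn * dt)  # spread the turn evenly over n_turn steps
        control_sequence += [np.array([0.0, omega])] * n_turn
    if n_drive > 0:
        v = distance / (n_drive * dt)  # spread the distance evenly over n_drive steps
        control_sequence += [np.array([v, 0.0])] * n_drive
    # Pad with zero commands so the sequence has exactly num_steps entries.
    control_sequence += [np.array([0.0, 0.0])] * (num_steps - len(control_sequence))
    return control_sequence


def simulate(init_state, control_sequence, dt=0.1):
    """Noise-free Euler rollout of the assumed unicycle model; returns the final state."""
    state = np.asarray(init_state, dtype=float)
    for v, omega in control_sequence:
        state = state + dt * np.array([v * np.cos(state[2]), v * np.sin(state[2]), omega])
    return state


controls = open_loop_control_policy(np.array([0.0, 0.0, 0.0]), np.array([1.0, 1.0]), 100)
final = simulate(np.array([0.0, 0.0, 0.0]), controls)
```

Separating turning from driving keeps the plan exact under the Euler update assumed here, since position only changes while the heading is constant. On a noisy system the same sequence will generally miss the goal, because nothing in it reacts to the accumulated error.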
