Question

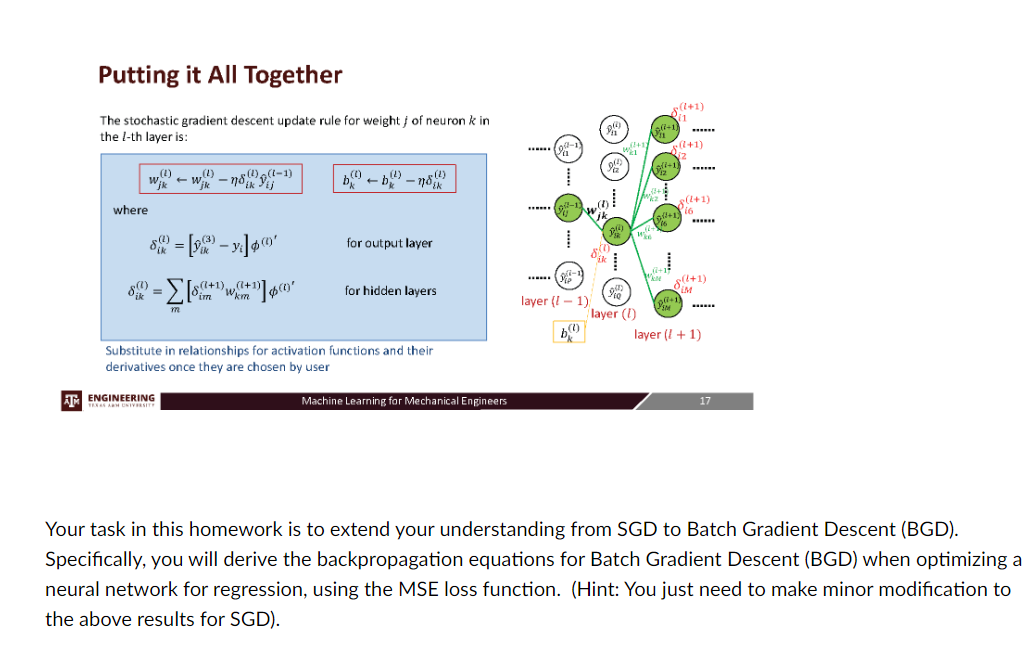

We have explored the process of training neural networks, focusing on the Stochastic Gradient Descent (SGD) algorithm. We derived the equations and examined their roles in optimizing the weights of the network to minimize the loss function. The results are as follows.

Putting it All Together

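The stochastic gradient descent update rule for weight $w_{ji}^{(l)}$ of neuron $j$ in the $l$-th layer is:

$$w_{ji}^{(l)} \leftarrow w_{ji}^{(l)} - \eta \, \delta_j^{(l)} \, \hat{y}_i^{(l-1)}$$

where

$$\delta_j^{(L)} = \left(\hat{y}_j^{(L)} - y_j\right) f'\!\left(z_j^{(L)}\right) \quad \text{for output layer}$$

$$\delta_j^{(l)} = f'\!\left(z_j^{(l)}\right) \sum_k w_{kj}^{(l+1)} \, \delta_k^{(l+1)} \quad \text{for hidden layers}$$

Here $\eta$ is the learning rate, $z_j^{(l)}$ is the weighted input to neuron $j$ in layer $l$, $f$ is the activation function, $\hat{y}_i^{(l-1)}$ is the output of neuron $i$ in the previous layer, and $y_j$ is the target. The output-layer term assumes the per-sample loss $\tfrac{1}{2}\sum_j (\hat{y}_j^{(L)} - y_j)^2$.

Substitute in the relationships for the activation functions and their derivatives once they are chosen by the user.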
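As a minimal sketch of the single-sample update described above, the following NumPy code applies one SGD step to a small two-layer network. Everything specific here is an assumption made for illustration and does not come from the original question: the network size, the tanh hidden activation, the linear output, the per-sample loss $\tfrac{1}{2}\lVert\hat{y} - y\rVert^2$, and the function names `backprop_grads`, `sgd_step`, and `loss`.

```python
import numpy as np

def loss(W1, W2, x, y):
    """Per-sample loss 0.5 * ||y_hat - y||^2 (assumed form of the squared error)."""
    y_hat = W2 @ np.tanh(W1 @ x)
    return 0.5 * np.sum((y_hat - y) ** 2)

def backprop_grads(W1, W2, x, y):
    """Gradients of the per-sample loss for a 2-layer net.

    Assumed architecture: tanh hidden layer, linear output layer.
    """
    # Forward pass
    a1 = np.tanh(W1 @ x)          # hidden-layer outputs
    y_hat = W2 @ a1               # linear output, so f'(z) = 1

    # Output-layer error term: (y_hat - y) * f'(z), with f' = 1 here
    delta2 = y_hat - y
    # Hidden-layer error term: f'(z) * sum_k w_kj * delta_k, with tanh' = 1 - a^2
    delta1 = (1.0 - a1 ** 2) * (W2.T @ delta2)

    # Gradient for each weight = error term of its neuron * input on that weight
    grad_W2 = np.outer(delta2, a1)
    grad_W1 = np.outer(delta1, x)
    return grad_W1, grad_W2

def sgd_step(W1, W2, x, y, eta=0.05):
    """One SGD update using a single training sample (x, y)."""
    grad_W1, grad_W2 = backprop_grads(W1, W2, x, y)
    return W1 - eta * grad_W1, W2 - eta * grad_W2

# Example usage with made-up data
rng = np.random.default_rng(0)
W1 = 0.5 * rng.normal(size=(3, 2))   # 2 inputs -> 3 hidden neurons
W2 = 0.5 * rng.normal(size=(1, 3))   # 3 hidden neurons -> 1 output
x = np.array([0.5, -1.0])
y = np.array([0.3])
W1_new, W2_new = sgd_step(W1, W2, x, y)
```

With a different activation function, only the derivative factors in `delta1` and `delta2` would change.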

Your task in this homework is to extend your understanding from SGD to Batch Gradient Descent (BGD). Specifically, you will derive the backpropagation equations for BGD when optimizing a neural network for regression, using the MSE loss function. Hint: You only need to make minor modifications to the above results for SGD.

Instructions:

1. Review SGD: Start by revisiting the equations and principles we covered for SGD. Understand the role of each term and how the weights are updated iteratively.

2. Understand BGD: Understand how Batch Gradient Descent differs from SGD.

3. Derive Backpropagation for BGD: Using the knowledge from steps 1 and 2, derive the backpropagation equations for BGD.
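One way the modification hinted at above might look is sketched below. It is an outline under stated assumptions, not the graded solution. The notation is assumed rather than taken from the question: $N$ training samples indexed by $n$, learning rate $\eta$, weighted input $z_{j,n}^{(l)}$, activation $f$, layer output $\hat{y}_{i,n}^{(l-1)}$, and target $y_{j,n}$. The loss is taken as the average of per-sample half squared errors. If the MSE is defined without the factor $\tfrac{1}{2}$, an extra factor of 2 appears in the output-layer term and can be absorbed into $\eta$.

```latex
% Batch loss: average of the per-sample losses (assumed form)
E = \frac{1}{N} \sum_{n=1}^{N} E_n ,
\qquad
E_n = \frac{1}{2} \sum_j \left( \hat{y}_{j,n}^{(L)} - y_{j,n} \right)^2

% The gradient is linear in E_n, so it is the average of the per-sample gradients
\frac{\partial E}{\partial w_{ji}^{(l)}}
  = \frac{1}{N} \sum_{n=1}^{N} \delta_{j,n}^{(l)} \, \hat{y}_{i,n}^{(l-1)}

% BGD update: one step per pass over all N samples
w_{ji}^{(l)} \leftarrow w_{ji}^{(l)}
  - \frac{\eta}{N} \sum_{n=1}^{N} \delta_{j,n}^{(l)} \, \hat{y}_{i,n}^{(l-1)}

% Per-sample error terms keep their SGD form, now carrying the sample index n
\delta_{j,n}^{(L)} = \left( \hat{y}_{j,n}^{(L)} - y_{j,n} \right) f'\!\left( z_{j,n}^{(L)} \right)
  \quad \text{(output layer)}

\delta_{j,n}^{(l)} = f'\!\left( z_{j,n}^{(l)} \right) \sum_k w_{kj}^{(l+1)} \, \delta_{k,n}^{(l+1)}
  \quad \text{(hidden layers)}
```

The key difference from SGD in this sketch is that the weights stay fixed while the error terms are computed for every sample, and a single averaged update is applied afterwards.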
