Question:

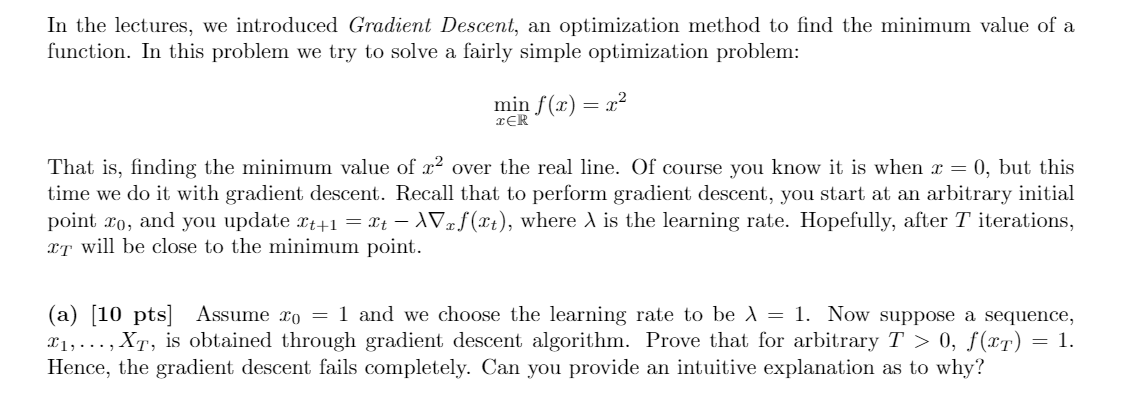

In the lectures, we introduced Gradient Descent, an optimization method to find the minimum value of a function. In this problem we try to solve a fairly simple optimization problem:

$$\min_{x \in \mathbb{R}} f(x) = x^2$$

That is, finding the minimum value of $x^2$ over the real line. Of course you know it is attained when $x = 0$, but this time we do it with gradient descent. Recall that to perform gradient descent, you start at an arbitrary initial point $x_0$, and you update $x_{t+1} = x_t - \lambda \nabla_x f(x_t)$, where $\lambda$ is the learning rate. Hopefully, after $T$ iterations, $x_T$ will be close to the minimum point.

(a) (10 pts) Assume $x_0 = 1$ and we choose the learning rate to be $\lambda = 1$. Now suppose a sequence, $x_1, \ldots, x_T$, is obtained through the gradient descent algorithm. Prove that for arbitrary $T > 0$, $f(x_T) = 1$. Hence, the gradient descent fails completely. Can you provide an intuitive explanation as to why
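A quick numerical check can make the behavior in part (a) concrete. Since the derivative of x^2 is 2x, the update with a learning rate of 1 becomes x_{t+1} = x_t - 2 x_t = -x_t, so each step only flips the sign of the iterate. The following is a minimal sketch, not a proof: the helper name `gradient_descent` and the smaller learning rate 0.25 used for contrast are assumptions introduced for illustration and do not appear in the problem statement.

```python
def f(x):
    """Objective from the problem: f(x) = x^2."""
    return x ** 2


def gradient_descent(x0, lr, steps):
    """Run gradient descent on f(x) = x^2 and return every iterate.

    Hypothetical helper for illustration; the gradient 2x is hard-coded.
    """
    xs = [x0]
    for _ in range(steps):
        grad = 2 * xs[-1]              # f'(x) = 2x
        xs.append(xs[-1] - lr * grad)  # x_{t+1} = x_t - lr * f'(x_t)
    return xs


# Setting from part (a): start at 1 with learning rate 1.
oscillating = gradient_descent(x0=1.0, lr=1.0, steps=6)
print(oscillating)                  # iterates alternate between 1 and -1
print([f(x) for x in oscillating])  # the objective never leaves 1

# Assumed contrast case: learning rate 0.25 halves the iterate each step.
converging = gradient_descent(x0=1.0, lr=0.25, steps=6)
print(converging)
```

With learning rate 1 the step is exactly twice the distance to the minimizer, so the iterate jumps to the mirror-image point on the other side of the parabola, where the function value is unchanged. In the contrast run the update is x_{t+1} = 0.5 x_t, so the iterates shrink toward 0.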
