Question:

package org.myorg;

import java.io.IOException;
import java.util.regex.Pattern;

import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;
import org.apache.log4j.Logger;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;

public class WordCount extends Configured implements Tool {

  private static final Logger LOG = Logger.getLogger(WordCount.class);

  public static void main(String[] args) throws Exception {
    int res = ToolRunner.run(new WordCount(), args);
    System.exit(res);
  }

  public int run(String[] args) throws Exception {
    Job job = Job.getInstance(getConf(), " wordcount ");
    job.setJarByClass(this.getClass());

    // args[0]: comma-separated input path(s); args[1]: output directory
    FileInputFormat.addInputPaths(job, args[0]);
    FileOutputFormat.setOutputPath(job, new Path(args[1]));
    job.setMapperClass(Map.class);
    job.setReducerClass(Reduce.class);
    job.setOutputKeyClass(Text.class);
    job.setOutputValueClass(IntWritable.class);

    return job.waitForCompletion(true) ? 0 : 1;
  }

  public static class Map extends Mapper<LongWritable, Text, Text, IntWritable> {

    // Constant count of 1 emitted for every word occurrence
    private static final IntWritable one = new IntWritable(1);
    private static final Pattern WORD_BOUNDARY = Pattern.compile("\\s*\\b\\s*");

    public void map(LongWritable offset, Text lineText, Context context)
        throws IOException, InterruptedException {

      String line = lineText.toString();
      Text currentWord = new Text();

      for (String word : WORD_BOUNDARY.split(line)) {
        // The split can yield empty tokens; skip them
        if (word.isEmpty()) {
          continue;
        }
        currentWord = new Text(word);
        context.write(currentWord, one);
      }
    }
  }

  public static class Reduce extends Reducer<Text, IntWritable, Text, IntWritable> {

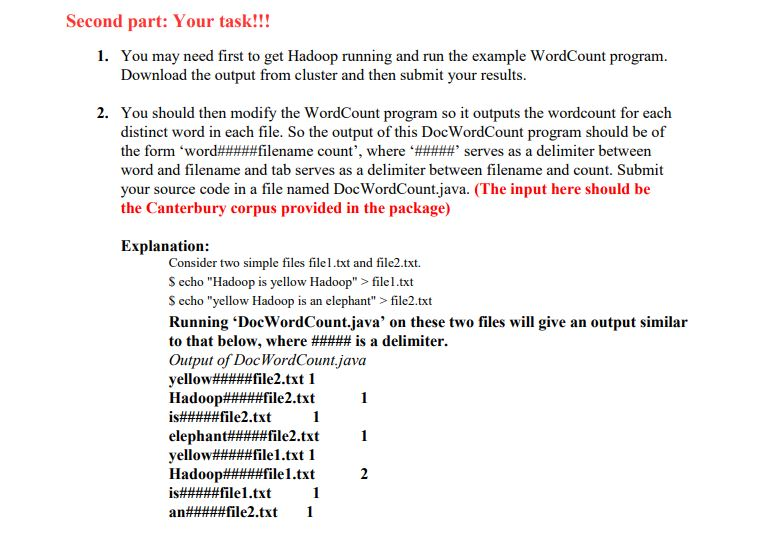

Second part: Your task!

1. You may first need to get Hadoop running and run the example WordCount program. Download the output from the cluster and then submit your results.

2. You should then modify the WordCount program so that it outputs the word count for each distinct word in each file. The output of this DocWordCount program should be of the form 'word#####filename count', where '#####' serves as the delimiter between word and filename and a tab serves as the delimiter between filename and count. Submit your source code in a file named DocWordCount.java. (The input here should be the Canterbury corpus provided in the package.)

Explanation: Consider two simple files, file1.txt and file2.txt.

$ echo "Hadoop is yellow Hadoop" > file1.txt
$ echo "yellow Hadoop is an elephant" > file2.txt

Running DocWordCount.java on these two files will give an output similar to that below, where ##### is a delimiter.

Output of DocWordCount.java
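One way to reason about the required change is to try the per-file counting logic in plain Java first, without a cluster. The sketch below is an illustration only, not the assignment's reference solution: the class name `DocWordCountSketch` and the method `count` are made up for this example, and the in-memory map stands in for Hadoop's shuffle and reduce phases. It reuses the `WORD_BOUNDARY` pattern from the WordCount mapper and builds keys of the form `word#####filename`.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.TreeMap;
import java.util.regex.Pattern;

// Plain-Java sketch of the DocWordCount logic (hypothetical class, runs without Hadoop).
class DocWordCountSketch {

    // Same tokenizer as the WordCount mapper in the question.
    private static final Pattern WORD_BOUNDARY = Pattern.compile("\\s*\\b\\s*");

    // Delimiter between word and filename, as required by the task.
    private static final String DELIMITER = "#####";

    // Counts each word per file; 'files' maps a file name to its full text.
    // A TreeMap keeps the keys sorted, similar to the sorted keys a reducer receives.
    static Map<String, Integer> count(Map<String, String> files) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, String> file : files.entrySet()) {
            for (String line : file.getValue().split("\n")) {
                for (String word : WORD_BOUNDARY.split(line)) {
                    if (word.isEmpty()) {
                        continue;
                    }
                    // "Map" step: the key combines the word and the file name.
                    // Assumption: in a Hadoop mapper with a file-based input format,
                    // the file name can usually be read from the input split, e.g.
                    //   ((FileSplit) context.getInputSplit()).getPath().getName()
                    // with FileSplit from org.apache.hadoop.mapreduce.lib.input.
                    String key = word + DELIMITER + file.getKey();
                    // "Reduce" step: sum the 1s emitted for each key.
                    counts.merge(key, 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        Map<String, String> files = new LinkedHashMap<>();
        files.put("file1.txt", "Hadoop is yellow Hadoop");
        files.put("file2.txt", "yellow Hadoop is an elephant");
        // A tab separates the key from the count, as in the required output format.
        count(files).forEach((key, n) -> System.out.println(key + "\t" + n));
    }
}
```

For the two sample files, this sketch prints eight lines, starting with `Hadoop#####file1.txt` followed by a tab and `2`. In an actual DocWordCount.java, only the mapper's output key would need to change under this approach; the reducer that sums the counts could stay as it is in WordCount.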
