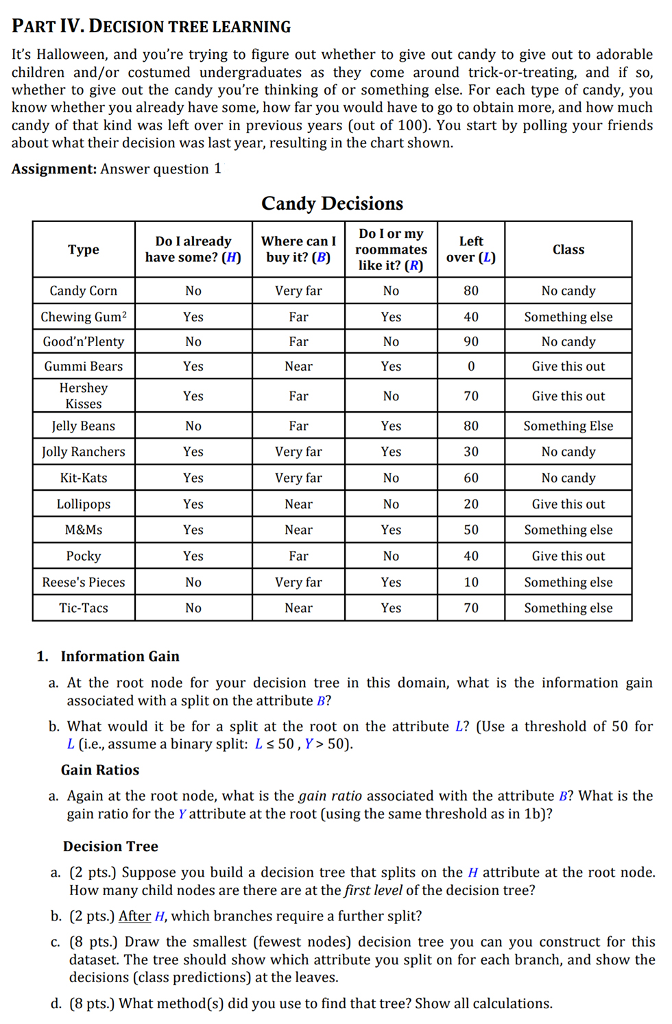

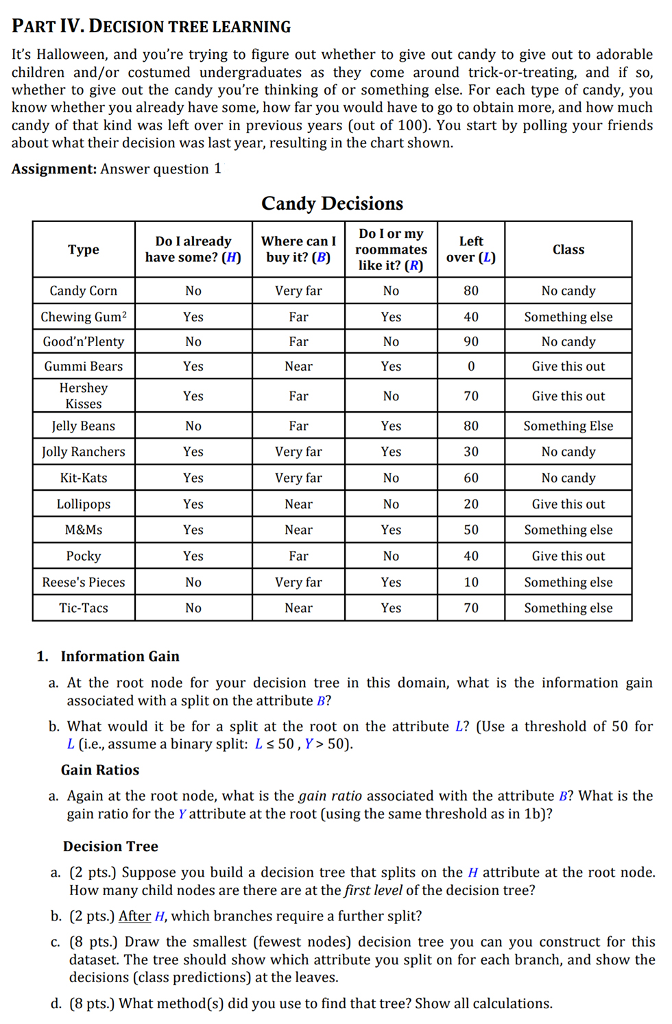

PART IV. DECISION TREE LEARNING

It's Halloween, and you're trying to decide whether to give out candy to the adorable children and/or costumed undergraduates who come around trick-or-treating, and if so, whether to give out the candy you're thinking of or something else. For each type of candy, you know whether you already have some, how far you would have to go to obtain more, and how much candy of that kind was left over in previous years (out of 100). You start by polling your friends about what their decision was last year, resulting in the chart shown.

Assignment: Answer question 1

Candy Decisions

Chart columns: Type; Do I already have some? (H); Do I or my roommates like it? (R); Where can I buy it? (B); Left over (L); Class.

| Type | Where can I buy it? (B) | Class |
|---|---|---|
| Candy Corn | Very far | No candy |
| Chewing Gum | Far | Something else |
| Good'n'Plenty | Far | No candy |
| Gummi Bears | Near | Give this out |
| Hershey Kisses | Far | Give this out |
| Jelly Beans | Far | Something else |
| Jolly Ranchers | Very far | No candy |
| Kit-Kats | Very far | No candy |
| Lollipops | Near | Give this out |
| M&Ms | Near | Something else |
| Pocky | Far | Give this out |
| Reese's Pieces | Very far | Something else |
| Tic-Tacs | Near | Something else |

[H and R (Yes/No) and L (out of 100): see the chart. Legible L values, in extracted order: 80, 40, 90, 0, 70, 80, 30, 60, 20, 50, 40, …, 70.]

1. Information Gain

a. At the root node for your decision tree in this domain, what is the information gain associated with a split on the attribute B?

b. What would it be for a split at the root on the attribute L? (Use a threshold of 50 for L, i.e., assume a binary split: L ≤ 50, L > 50.)

2. Gain Ratios

a. Again at the root node, what is the gain ratio associated with the attribute B?

b. What is the gain ratio for the L attribute at the root (using the same threshold as in 1b)?

3. Decision Tree

a. (2 pts.) Suppose you build a decision tree that splits on the H attribute at the root node. How many child nodes are there at the first level of the decision tree?

b. (2 pts.) After H, which branches require a further split?

c. (8 pts.)
Draw the smallest (fewest nodes) decision tree you can construct for this dataset. The tree should show which attribute you split on for each branch, and show the decisions (class predictions) at the leaves.

d. (8 pts.) What method(s) did you use to find that tree? Show all calculations.
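
For reference, questions 1 and 2 are usually answered with the entropy-based definitions: information gain is the entropy of the class labels minus the size-weighted entropy of the subsets produced by the split, and gain ratio divides that gain by the split information (the entropy of the attribute values themselves). The Python sketch below assumes those standard ID3/C4.5-style definitions with base-2 logarithms. The function names and the four-row toy dataset are made up for illustration and are not taken from the candy chart.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy, in bits, of a sequence of discrete labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def partition(values, labels):
    """Group the class labels by the attribute value of each example."""
    groups = {}
    for value, label in zip(values, labels):
        groups.setdefault(value, []).append(label)
    return groups


def information_gain(values, labels):
    """Entropy of the labels minus the weighted entropy after the split."""
    total = len(labels)
    remainder = sum(
        (len(group) / total) * entropy(group)
        for group in partition(values, labels).values()
    )
    return entropy(labels) - remainder


def gain_ratio(values, labels):
    """Information gain divided by split information (entropy of the values)."""
    split_info = entropy(values)
    return information_gain(values, labels) / split_info if split_info > 0 else 0.0


def binarize(values, threshold):
    """Turn a numeric attribute into a binary one: value <= threshold or not."""
    return [v <= threshold for v in values]


# Hypothetical toy data (NOT the candy chart): four examples, two classes.
toy_distance = ["Near", "Near", "Far", "Far"]
toy_leftover = [10, 60, 20, 90]
toy_class = ["Give", "Give", "Keep", "Keep"]

gain_distance = information_gain(toy_distance, toy_class)
ratio_distance = gain_ratio(toy_distance, toy_class)
gain_leftover = information_gain(binarize(toy_leftover, 50), toy_class)

print(gain_distance, ratio_distance, gain_leftover)
```

In the toy data the distance attribute separates the two classes perfectly, so its gain equals the full one bit of class entropy, while the thresholded numeric attribute leaves each branch evenly mixed and gains nothing. The same functions can be applied to the chart's columns once they are entered as lists.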